Abstract

Arising from: K. Panchanathan & R. Boyd Nature 432, 499–502 (2004); K. Panchanathan & R. Boyd reply

Panchanathan and Boyd1 describe a model of indirect reciprocity in which mutual aid among cooperators can promote large-scale human cooperation without succumbing to a second-order free-riding problem2 (whereby individuals receive but do not give aid). However, the model does not include second-order free riders as one of the possible behavioural types. Here I present a simplified version of their model to demonstrate how cooperation unravels if second-round defectors enter the population, which shows that the free-riding problem remains unsolved.

Main

Suppose a population shows two types of behaviour. In each period, ‘cooperators’ pay a cost C to contribute to a public good and receive a benefit B. ‘Defectors’ also receive benefit B but do not pay the cost. Assuming growth of each type in the population is proportional to mean pay-offs, how can we explain the emergence and persistence of cooperators?

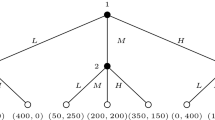

Panchanathan and Boyd propose that a first-round public-goods game is followed by a second-round ‘mutual-aid’ game. In this game, each ‘shunner’ cooperates in the first round and then pays a cost c to generate a benefit b to one randomly chosen shunner, which yields a pay-off of B−C+b−c. Shunners can invade and dominate a population of defectors if b−c>C. In other words, shunners will prevail if they can create an in-group net surplus from mutual aid in the second round that exceeds the cost of cooperation in the first round. However, this is only possible if shunners can exclude second-round defectors from mutual aid. Although Panchanathan and Boyd include in their model individuals who receive a benefit without paying the cost in the first round (defectors), they do not consider such a type for the second round. Thus, rather than solving the second-order free-rider problem, their model merely assumes it away.
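The invasion condition b−c>C can be illustrated with a minimal sketch. It assumes a discrete replicator update in which each type grows in proportion to its pay-off, treats B as a constant, and uses illustrative parameter values; the function names and numbers are hypothetical and do not come from Panchanathan and Boyd's model.

```python
def shunner_payoff(B, C, b, c):
    """Pay-off to a shunner: B - C from the public good, b - c from mutual aid."""
    return B - C + b - c


def defector_payoff(B):
    """Pay-off to a first-round defector: the public-good benefit only."""
    return B


def replicator_step(p, w_shunner, w_defector):
    """One discrete replicator update of the shunner share p.

    Assumption: both pay-offs are positive, so the mean pay-off is positive.
    """
    mean = p * w_shunner + (1 - p) * w_defector
    return p * w_shunner / mean


def simulate(p0, B, C, b, c, steps=200):
    """Iterate the replicator update from an initial shunner share p0."""
    p = p0
    for _ in range(steps):
        p = replicator_step(p, shunner_payoff(B, C, b, c), defector_payoff(B))
    return p


# Illustrative values only: b - c = 2 exceeds C = 1, so rare shunners spread.
rare_shunners = simulate(0.01, B=3, C=1, b=3, c=1)
# Here b - c = 0.5 falls short of C = 1, so even common shunners decline.
common_shunners = simulate(0.99, B=3, C=1, b=1.5, c=1)
```

With these values the shunner share approaches 1 in the first case and 0 in the second, matching the condition stated in the text.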

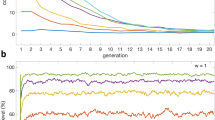

To see why, suppose that ‘second-round defectors’ enter the population. These individuals receive the same pay-off as shunners in the first round and are eligible to receive aid in the second round. However, they do not give aid to others. If we denote the proportion of shunners in the population by p, then the average pay-off for second-round defectors is B−C+bp and the average pay-off to shunners changes to B−C+bp−c, which is lower by c at every value of p. If second-round defectors invade a population of shunners, then second-round cooperation collapses, pay-offs to second-round defectors fall, and eventually ordinary defectors can invade and dominate the population, leaving us back where we started.
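A short sketch of this argument, under the same hedged assumptions as any simple replicator illustration (pay-off-proportional growth, constant B, only shunners and second-round defectors present, hypothetical function names and parameter values):

```python
def second_round_payoffs(p, B, C, b, c):
    """Return (shunner, second-round defector) pay-offs at shunner share p.

    Assumption: second-round defectors cannot be excluded from aid.
    """
    defector = B - C + b * p
    shunner = B - C + b * p - c
    return shunner, defector


def shunner_share_after(p0, B, C, b, c, steps=500):
    """Iterate a discrete replicator update; assumes positive pay-offs."""
    p = p0
    for _ in range(steps):
        w_s, w_d = second_round_payoffs(p, B, C, b, c)
        mean = p * w_s + (1 - p) * w_d
        p = p * w_s / mean
    return p


# Illustrative values only: shunners start at 99% of the population.
final_share = shunner_share_after(0.99, B=3, C=1, b=3, c=1)
```

Because the pay-off gap equals c regardless of p, the shunner share shrinks at every step, and `final_share` ends close to zero even though shunners started as the overwhelming majority.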

Panchanathan and Boyd implicitly acknowledge this problem when they note that each individual must have perfect information about the cooperation and aid-giving histories of all other members of the population in order for mutual aid to be sustained. In other words, shunners must be able to recognize and exclude second-round defectors from receiving aid. They do incorporate an error term into their model, but they do not consider errors in which individuals mistakenly help a recipient of bad reputation during the mutual-aid game1.

Suppose that e is the probability that shunners mistakenly aid a second-round defector or withhold aid from a shunner. Cooperation can only be maintained when the pay-off to shunners, B−C+bp(1−e)−c[p(1−e)+e(1−p)], is greater than the pay-off to second-round defectors, B−C+ebp. For b>c and e<1/2, this inequality rearranges to p > ce/[(b−c)(1−2e)].

This means a population of shunners (p=1) is only evolutionarily stable if the error is sufficiently small: e < (b−c)/(2b−c).

In other words, cooperation will unravel if second-round defectors cannot be detected most of the time. In contrast, a population of second-round defectors (p=0) is stable for any positive error rate and can resist invasion even when shunners are common. Thus, the emergence of shunners when they are rare cannot be explained by the authors' model1.
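These thresholds can be checked numerically. The sketch below takes the two pay-off expressions given above, and assumes b>c and e<1/2 so that setting the pay-offs equal and solving for p or for e is well defined. The helper names `critical_share` and `critical_error` and the parameter values are illustrative, not taken from the original model.

```python
def shunner_payoff(p, e, B, C, b, c):
    """Shunner pay-off with error rate e, as given in the text."""
    return B - C + b * p * (1 - e) - c * (p * (1 - e) + e * (1 - p))


def second_round_defector_payoff(p, e, B, C, b):
    """Second-round defector pay-off: aided only by mistake."""
    return B - C + e * b * p


def critical_share(e, b, c):
    """Shunner share at which the two pay-offs are equal.

    Assumption: b > c and 0 <= e < 0.5.
    """
    return c * e / ((b - c) * (1 - 2 * e))


def critical_error(b, c):
    """Error rate at which a pure shunner population (p = 1) breaks even.

    Assumption: b > c.
    """
    return (b - c) / (2 * b - c)


# Illustrative values only.
B, C, b, c = 3, 1, 3, 1
e_star = critical_error(b, c)
p_star = critical_share(0.1, b, c)
gap_at_threshold = (shunner_payoff(p_star, 0.1, B, C, b, c)
                    - second_round_defector_payoff(p_star, 0.1, B, C, b))
```

At p=0 the shunner pay-off reduces to B−C−ce, which is below B−C for any positive e, consistent with the claim that rare shunners cannot invade.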

Note that the simple model presented here raises a broader concern with all models of indirect reciprocity3,4,5 and related experimental results6. Previous work has already shown that indirect reciprocity is stable only when donors have very reliable information about the behavioural histories of all individuals in the population4,7. But even this assumes that there is no evolutionary pressure on the reliability of information. If at least some acts of giving are not observable, then individuals may have an incentive to misrepresent their behavioural histories in order to secure the benefits of indirect reciprocity without paying the costs. Considering a human context in particular, what keeps these individuals from evolving deceptive behaviours that would reduce the reliability of information and allow them to benefit from aid without providing it?

References

1. Panchanathan, K. & Boyd, R. Nature 432, 499–502 (2004).

2. Fehr, E. Nature 432, 449–450 (2004).

3. Nowak, M. A. & Sigmund, K. Nature 393, 573–577 (1998).

4. Panchanathan, K. & Boyd, R. J. Theor. Biol. 224, 115–126 (2003).

5. Alexander, R. D. The Biology of Moral Systems (de Gruyter, New York, 1987).

6. Milinski, M., Semmann, D. & Krambeck, H. J. Nature 415, 424–426 (2002).

7. Nowak, M. A. & Sigmund, K. J. Theor. Biol. 194, 561–574 (1998).

Cite this article

Fowler, J. Second-order free-riding problem solved?. Nature 437, E8 (2005). https://doi.org/10.1038/nature04201

DOI: https://doi.org/10.1038/nature04201
