Abstract

Background:

A third version of the Spinal Cord Independence Measure (SCIM III), made up of three subscales, was formulated following comments by experts from several countries and Rasch analysis performed on the previous version.

Objective:

To examine the validity, reliability, and usefulness of SCIM III using Rasch analysis.

Design:

Multicenter cohort study.

Setting:

Thirteen spinal cord units in six countries from North America, Europe, and the Middle-East.

Subjects:

425 patients with spinal cord lesions (SCL).

Interventions:

SCIM III assessments by professional staff members. Rasch analysis of admission scores.

Main outcome measures:

SCIM III subscale match between the distribution of item difficulty grades and the patient ability measurements; reliability of patient ability measures; fit of data to Rasch model requirements; unidimensionality of each subscale; hierarchical ordering of categories within items; differential item functioning across classes of patients and across countries.

Results:

Results supported the compatibility of the SCIM subscales with the stringent Rasch requirements. Average infit and outfit mean-square indices were 0.79–1.06; the number of statistically distinct strata of ability was 3 to 4; most thresholds between adjacent categories were properly ordered; and item hierarchy was stable across most of the clinical subgroups and across countries. In a few items, however, misfit or disordering of category thresholds was found.

Conclusions:

The scores of each SCIM III subscale appear to be a reliable and useful quantitative representation of a specific construct of independence after SCL. This justifies the use of SCIM in clinical research, including cross-cultural trials. The results also suggest that further refinement of the scale would be worthwhile.


Introduction

The Spinal Cord Independence Measure (SCIM) is the only comprehensive ability rating scale that has been designed specifically for patients with spinal cord lesions (SCL). The instrument focuses on performing everyday tasks, and captures the economic burden of disability as well as the impact of disability on the patient's overall medical condition and comfort.1 The validity, reliability, and advantages of the first two versions (SCIM I and SCIM II) have been shown in several publications,1, 2, 3, 4, 5, 6, 7 demonstrating that SCIM II can be used for clinical purposes and outcome assessment in research.8

Following an in-depth psychometric analysis of SCIM II through Rasch modeling,1 comments by staff members and experts from several countries, and the need to address intercultural biases, a third version (SCIM III) was formulated. The SCIM III scale, presented in the Appendix, is a profile comprising three complementary subscales of 6, 4, and 9 items, respectively. Each item represents a daily task. Item grades are ordered by increasing difficulty (requiring higher patient ability); each item has 2–9 grades, and the higher the grade, the better the patient's performance or independence. The subscales cover the related but distinct domains of self-care (score range 0–20), respiration and sphincter management (0–40), and mobility (0–40). The total score ranges from 0 to 100. The mobility subscale consists of two sections: one for ‘room and toilet’ and one for ‘indoors and outdoors, on even surface.’

To examine the validity and usefulness of SCIM III in a multicultural set-up, the SCIM III subscales were tested in a multicenter international study using Rasch analysis as the leading psychometric approach.

Patients

Rehabilitation patients from 13 spinal units in six countries in North America, Europe, and the Middle-East were enrolled consecutively. Inclusion criteria were an SCL (ASIA impairment grade A, B, C, or D) and age ≥18 years. Patients with concomitant impairments, such as brain injury or mental disease, or with any other condition that might influence their everyday function, were excluded.

Four hundred and twenty-five patients were included, 309 men and 116 women, 188 with tetraplegia, and 237 with paraplegia. Mean age was 46.93 years (SD=18.17). ASIA Grade was A in 151 patients (35.5%), B in 59 (13.9%), C in 92 (21.6%), and D in 119 (28%). Lesion etiology was traumatic in 261 patients (61.4%) and nontraumatic in 164 (38.6%).

The nontraumatic etiologies were spinal stenosis in 23 patients (5.4%), benign tumor in 27 (6.3%), disc protrusion in 25 (5.9%), myelopathy of unknown origin in 16 (3.8%), vascular impairment in 16 (3.8%), syringomyelia in 5 (1.2%), decompression sickness in 3 (0.7%), multiple sclerosis in 2 (0.5%), congenital anomaly in 2 (0.5%), spinal abscess in 2 (0.5%), metastatic disease in 2 (0.5%), and other in 41 patients (9.6%).

Demographic and clinical information related to patient data in each participating unit is provided in Table 1a–c.

Methods

Scoring procedure

All patients were evaluated with the SCIM III questionnaire within a week after the beginning of the rehabilitation program and within the week before discharge from the unit or department. The current analysis refers only to the data obtained at admission. Each SCIM III item was scored by direct observation by expert professionals selected at each unit (a physician, nurses, occupational therapists, or physiotherapists). In exceptional cases, for example bowel habits, for which direct observation was troublesome, specific tasks could be scored according to information obtained from a staff member who had observed the patient during routine care. Patient data and SCIM III scores were collected in each participating unit, entered into Excel files, e-mailed to the first two authors, and pooled for analysis.

Data analysis: Rasch modeling

Questionnaires are only a proxy for measurement

Cumulative questionnaires are aimed at measuring whole-person variables such as behaviors, attitudes, or perceptions, starting from the count of behavioral observations or of answers to questions (‘items’).9 Unfortunately, it is well known that summed raw scores (ie, counts of observations) can be very far from a true linear measure. Usually they demonstrate good reproducibility in test–retest trials, but they often lack fundamental properties such as unidimensionality and linearity.10 This reflects the intrinsically arbitrary coupling between discrete numbers and the underlying continuous concepts (in which numbers are assigned to ‘less’ or ‘more’ of a given variable).11

The Rasch model solution to the score-measure inconsistency

The formal solution to the problems described above came from the Danish mathematician Georg Rasch, who died in 1980.12 Starting from the questionnaire's raw scores, Rasch analysis provides theoretical expectations, that is, what the proportions of pass/fail responses should be to comply best with the requirements of a unidimensional, linear measure (the so-called Rasch model).12, 13, 14, 15 This implies (1) estimates of the subjects’ abilities and of the items’ difficulty levels that are independent of each other; and (2) the assumption that more able subjects have a greater probability of passing any item than less able subjects (mirror reasoning applies to more and less difficult items facing a given subject). Because the actual data can be contrasted with sound theoretical expectations, the Rasch validation of a questionnaire is more stringent and the Rasch-compatible scores are more valid than those provided by any conventional data-referenced psychometric analysis. This is why Rasch analysis has been claimed to bridge the gap between behavioral and biomedical measurements.16 The stringent theoretical expectations are mitigated by the fact that the model is probabilistic in nature: it also expects some error around its estimates.

Linearity of measures

Raw score units represent unknown distances, despite numeric uniformity (a one-point step from mild to moderate may represent a substantively different interval than a one-point step from moderate to severe). They are also discrete (intermediate values remain unknown). Rasch measures are linear11, 12, 13 in that their units are invariant. These units are called ‘logits’ (familiar to statisticians) because they come from the log-transformation of pass/fail probabilities (log-odds). Proportions of ‘pass’ and ‘fail’ observed in the data matrix are the intuitive starting point for the estimation of ability and difficulty, mirror concepts measured in logits: subjects are more able the more they pass difficult items, and items are more difficult the more they resist able subjects. The Rasch model creates theoretically expected ‘pass’ and ‘fail’ proportions (ie, modeled probabilities). These are not linear, however, until they are logit-transformed (for instance, probabilities are bounded between 0 and 1, whereas ability and difficulty may span from minus to plus infinity; see Andrich13 and Wright and Masters15 for technicalities). A ‘0’ logit measure is conventionally assigned to the mean difficulty of items (negative values mean less difficult than the mean). A subject's ability is given as the logit difference with respect to this ‘0’ value. To remain in touch with the more familiar probabilities, one can always relate logits to pass probabilities: for instance, a difference of 0, 1, and 2 logits between subject ability and item difficulty implies a 50, 73, and 88% pass probability, respectively.
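The logit-to-probability relationship described above can be checked numerically. The sketch below assumes the dichotomous Rasch model, in which the probability of passing is exp(B − D)/(1 + exp(B − D)), with B the subject's ability and D the item difficulty, both in logits. The function names are illustrative and are not taken from any Rasch software.

```python
import math


def pass_probability(ability: float, difficulty: float) -> float:
    """Dichotomous Rasch model: P(pass) = exp(B - D) / (1 + exp(B - D))."""
    return 1.0 / (1.0 + math.exp(-(ability - difficulty)))


def logit(p: float) -> float:
    """Inverse transformation: the log-odds of a pass probability, in logits."""
    return math.log(p / (1.0 - p))


# Ability minus difficulty of 0, 1, and 2 logits
for gap in (0, 1, 2):
    print(gap, round(pass_probability(gap, 0.0), 2))
```

Run as written, it prints probabilities of 0.5, 0.73, and 0.88. Because only the difference B − D enters the formula, the same probabilities follow for any item once abilities are expressed relative to that item's difficulty.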

Agreement (fit) between actual and expected responses

Unlike raw scores, which are discrete counts of events, Rasch measures are continuous. In each subject–item interaction, the observed score of 0 or 1 is set against the model probability (the expected score, bounded between 0 and 1) that 1 would be observed. The differences between the observed (eg, 1) and the ‘expected’ (eg, 0.73) scores are algebraically manipulated and become first ‘residuals’ and ultimately a summary index of data-model agreement (‘fit’ or, depending on one's viewpoint, ‘misfit’). ‘Fit’ means that the amount of disagreement accumulated across response strings is small enough to allow the inferences that (1) the raw scores are an acceptable surrogate of a true linear measure, and (2) they are model-consistent and thus generalizable to future model-consistent samples. Because of the model's probabilistic nature, misfit is signaled only beyond confidence limits defined according to conventional statistics.14, 15 ‘Fit’ analysis is used extensively in this study. The Rasch model, originally conceived for dichotomous (no/yes, 0/1) items, has become a family of equations applicable to questionnaires with ‘polytomous’ items (so-called ‘rating scales’: 0/1/2, no/mild/moderate, alone/with supervision/with assistance) and even to contexts involving parallel raters (Linacre's ‘many-facet’ models).17

Rasch analysis: specific applications to this study

Although Rasch analysis of SCIM II was performed on four subscales,1 preliminary results of the present study showed that good metric properties can be achieved when the two mobility subscales are collapsed into a single mobility subscale. Therefore, in this study we analyzed three subscales of the SCIM III profile.

The SCIM III scale includes polytomous items. The data analyzed for each patient were those scored by one rater on admission to the spinal unit. The Andrich rating scale model was adopted.13 The technicalities of Rasch modeling are now accessible to nonspecialist professionals in simple textbooks18, 19 and in articles written for professionals working in diverse fields such as education20 and rehabilitation.9 Several dedicated and increasingly user-friendly software programs are also available (see www.rasch.org for an overview of software and training opportunities).
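A minimal sketch of the Andrich rating scale model follows, assuming that each item's grades are recoded to consecutive ranks 0..m (SCIM III raw grades such as 0, 5, 8, and 10 would become ranks 0, 1, 2, and 3) and that one set of thresholds τ1..τm applies to the items analyzed together. Under this model the probability of rank k is proportional to exp of the cumulative sum of (B − D − τj) for j = 1..k, with rank 0 taking the value exp(0). The function names and threshold values are hypothetical; this is not the Winsteps implementation used in the study.

```python
import math
from typing import List, Sequence


def rsm_category_probs(ability: float, difficulty: float,
                       thresholds: Sequence[float]) -> List[float]:
    """Probabilities of ranks 0..m under the Andrich rating scale model."""
    # Rank 0 has log-numerator 0; rank k adds (B - D - tau_k) cumulatively.
    log_num = [0.0]
    for tau in thresholds:
        log_num.append(log_num[-1] + (ability - difficulty - tau))
    top = max(log_num)  # subtract the maximum for numerical stability
    weights = [math.exp(v - top) for v in log_num]
    total = sum(weights)
    return [w / total for w in weights]


def rsm_expected_score(ability: float, difficulty: float,
                       thresholds: Sequence[float]) -> float:
    """Model-expected rank: the probability-weighted mean of ranks 0..m."""
    probs = rsm_category_probs(ability, difficulty, thresholds)
    return sum(k * p for k, p in enumerate(probs))


# Hypothetical thresholds (logits) for a four-category item
taus = [-1.0, 0.0, 1.0]
print(rsm_category_probs(0.0, 0.0, taus))
print(rsm_expected_score(0.0, 0.0, taus))
```

At B − D equal to a threshold τk, ranks k − 1 and k are equally probable, which is exactly the definition of ‘threshold’ used in the figures of this paper. The expected score from the second function is the polytomous counterpart of the 0.73 used in the dichotomous example of fit.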

Matching between the scale and the patients’ sample (Rasch ruler)

The item difficulties, and at a higher resolution the difficulty of each category-grade within the items, can be represented as ticks along a ruler. Graphic display of a ‘ruler map’ (see Figures 1a–c and 3a–c) allows easy comparison of the reciprocal targeting of subjects and items, and comparison of the spread and density of the ‘ticks.’

(a) The distribution of persons (left) and items (right) along the self-care person ability and item difficulty values, measured on a logit scale (more able subjects and more difficult items on top). The map indicates that item difficulty is about evenly distributed among the degrees of difficulty, between −3 and +3 logits, feeding being the easiest and dressing lower body the most difficult, and that patient mean ability matches item mean difficulty. Patient ability is distributed rather symmetrically between −5.5 and +5 logits. The distribution of ability levels tends to normality, but there is an aggregation of patients with very low ability around the −6 logit level. EACH ‘#’=6 persons; ‘.’ (dot)=1 person; +=one logit distance; M=Mean; S=1 SD; T=2 SD. (b) The distribution of persons (left) and items (right) along the respiration-sphincter person ability and item difficulty values, measured on a logit scale (more able subjects and more difficult items on top). This map indicates that sphincteric item difficulty is about evenly distributed among the degrees of difficulty, between 0.5 and 2 logits, bladder management being the easiest and toileting the most difficult, but respiration management is far easier, about −4 logits. Patient mean ability matches item mean difficulty. Patient ability is about symmetrically distributed for most of the patients among the degrees of ability, between −4 and +4 logits. The distribution of ability levels tends to normality, but more than half the patients are less able than the mean, and many of them aggregate between −2 and 0 logits. It seems that the combination of respiration and sphincter management in one scale creates an artificial impression of difficulty of the sphincteric tasks and of lower patient ability in performing these tasks. EACH ‘#’=7 persons. 
(c) The distribution of persons (left) and items (right) along the mobility person ability and item difficulty values, measured on a logit scale (more able subjects and more difficult items on top). This map indicates that mobility item difficulty is about evenly distributed among the degrees of difficulty, between −3.5 and +3 logits, mobility in bed being the easiest and stair management the most difficult. Patient ability is distributed among the degrees of ability, between −7 and +7 logits. The distribution is asymmetrical, with a long ‘tail’ at higher levels of ability. Many patients aggregate between −7 and −2 logits. Each ‘#’=10 persons

The so-called ‘threshold imprint’ for each of the three SCIM III subscales (labels (a), (b), and (c) refer to the Self-care, Respiration-sphincter, and Mobility subscales, respectively). Subjects’ ability levels (above) and item threshold difficulty levels (below) are aligned along the shared continuum (both parameters increase rightwards). The bars give, on the ordinate, the frequency of subjects falling within successive 1-logit bins. Each of the lower abscissae refers to an item (see labels). The thresholds of each item are plotted at their estimated locations (1 represents the ‘threshold’ at which the first and second category scores are equally likely, 2 the threshold at which the second and third category scores are equally likely, etc.). The difficulty levels of thresholds from different items can intermingle or even overlap. For instance, in the self-care subscale the 1st threshold of the 6th item lies between the 1st and 2nd thresholds of the 1st item. Threshold ‘disordering’ can also be easily detected: for example, in the Respiration scale the third threshold is located to the left of the second one. Item 9 is dichotomous, so its single threshold equals the item difficulty presented in Figure 1. Overall, in all three SCIM III subscales there is a satisfactory match between the mean difficulty of the whole set of thresholds and their distribution (spread and density) below the x-axis, on the one hand, and the distribution of patient ability levels above the x-axis, on the other. This supports the validity and the practical usefulness of the SCIM III ‘rulers.’

Reliability of patient ability measures, separability, and discernible strata

Reliability reflects the degree to which the differences across patient measurements are due to actual differences in ability rather than to measurement error (ie, the ratio of true variance to true-plus-error variance). The ‘real reliability index’ adopted in this study is rather conservative and has an ideal maximum value of 1. The ‘separability index’ and the number of ‘discernible strata’ are related concepts. They reflect the degree to which measurements (levels of ability in this study) differ not only in absolute but also in statistical terms. Measurements within the same ‘statistical’ stratum do not differ significantly (in this study, at P<0.05). The higher the reliability, the higher the number of strata. If the reliability index is >0.7, at least two strata are discernible14, 15 and the hypothesis that all differences in ability measurements reflect only randomness can be rejected.
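The link between reliability, separation, and strata can be sketched with the formulas commonly attributed to Wright and Masters, assumed here: separation G = √(R/(1 − R)) and strata H = (4G + 1)/3. The ‘real’ indices reported in this study were computed by Winsteps and may differ in detail from this simplified version. The function names are hypothetical.

```python
import math


def separation_from_reliability(reliability: float) -> float:
    """Separation G = sqrt(R / (1 - R)): true spread in units of measurement error."""
    return math.sqrt(reliability / (1.0 - reliability))


def strata_from_separation(separation: float) -> float:
    """Statistically distinct strata, H = (4G + 1) / 3 (Wright and Masters' formula)."""
    return (4.0 * separation + 1.0) / 3.0


g = separation_from_reliability(0.7)
print(round(g, 2), round(strata_from_separation(g), 2))
```

With R = 0.7 the sketch gives G ≈ 1.53 and H ≈ 2.37. This agrees with the rule stated above that a reliability above 0.7 implies at least two discernible strata.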

Fit computation

The concept of fit relates to the agreement between observed scores and the scores expected by the Rasch model. The underlying question is whether the model expectations are reasonably justified by the actual raw data from which they were extracted. Usually, ‘fit’ is estimated for a person's string of responses to the items, or for an item's string of responses obtained from the persons. However, it can also be estimated for the expected scores corresponding to a given response ‘category’ (eg, was score ‘2’ actually observed whenever it was likely/expected?). Mean fit indices can be computed across series of persons or items. In this study, fit was computed as both ‘outfit’ and ‘infit’ indices21 assigned to individual persons and items. Outfit is outlier-sensitive (highly unexpected responses, however rare, have a strong impact). The infit index is more sensitive to multiple slightly unexpected responses. In this study, both indices are given in their ‘mean-square’ (MNSQ) form (see Smith21 for technicalities). The model-expected variance gives rise to the ideal MNSQ fit index of 1. Above 1 (more variance than the model expects), ‘misfit’ begins. Below 1, fit is deemed acceptable anyway (although values below 1 may flag overly predictable responses, suggesting redundancy of the ‘overfitting’ item).
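The two mean-square indices can be sketched for a single dichotomous item, under two simplifying assumptions: the person abilities and item difficulty are treated as known (in practice they are estimated), and the item is scored 0/1 rather than polytomously as in SCIM III. Outfit is computed as the unweighted mean of squared standardized residuals. Infit is computed as the sum of squared residuals divided by the sum of model variances, which gives less weight to responses from persons far from the item's difficulty. The function name and the response string are hypothetical.

```python
import math
from typing import Sequence, Tuple


def item_fit_mnsq(observed: Sequence[int], abilities: Sequence[float],
                  difficulty: float) -> Tuple[float, float]:
    """Return (infit, outfit) mean-squares for one dichotomous item."""
    sq_resid, variances, sq_std_resid = [], [], []
    for x, b in zip(observed, abilities):
        p = 1.0 / (1.0 + math.exp(-(b - difficulty)))  # expected score
        w = p * (1.0 - p)                              # model variance
        r = x - p                                      # residual
        sq_resid.append(r * r)
        variances.append(w)
        sq_std_resid.append(r * r / w)                 # squared standardized residual
    outfit = sum(sq_std_resid) / len(sq_std_resid)     # unweighted mean
    infit = sum(sq_resid) / sum(variances)             # information-weighted mean
    return infit, outfit


# Hypothetical string: the most able person (4 logits) fails an average item
abilities = [-2.0, -1.0, 0.0, 1.0, 2.0, 4.0]
observed = [0, 0, 1, 1, 1, 0]
infit, outfit = item_fit_mnsq(observed, abilities, difficulty=0.0)
print(round(infit, 2), round(outfit, 2))
```

Here the single unexpected failure raises outfit to about 9.4, while infit rises only to about 1.6. This illustrates why outfit is described as outlier-sensitive.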

Misfit: how much is too much?

There are no mandatory fit limits. If a statistical criterion is adopted, misfit within the range expected by chance is acceptable. In this view, a range of fit acceptable at P<0.05 for either persons or items is 0.8–1.4 for samples of the size used in this study.21

Differential item functioning

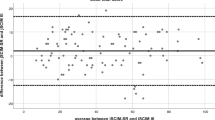

Differential item functioning (DIF) (also called differential test functioning or ‘item bias’) is a more subtle yet fundamental way to assess fit. DIF explores the stability of the hierarchy of item difficulty across classes of observations. In this study, the SCIM III subscales were DIF-tested across meaningful classes of subjects, such as men/women, low/high ability subjects, and older/younger subjects, and across countries. When item difficulties are contrasted across two classes of patients, the difficulty estimates should fall within the 95% confidence bands surrounding the identity line (Figure 4).15, 18, 19
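One way to operationalize the 95% band check, assumed here rather than taken from the study's software, is a z statistic for the difference between an item's difficulty estimated separately in two classes. The standard error of that difference combines the two standard errors. In practice each class's difficulties are first centered so that the mean item difficulty is 0 in both classes. The item labels and estimates below are hypothetical.

```python
import math
from typing import Dict, List, Tuple


def dif_contrast(d_a: float, se_a: float, d_b: float, se_b: float) -> Tuple[float, float]:
    """Difference in item difficulty between classes A and B, and its z statistic."""
    diff = d_a - d_b
    z = diff / math.sqrt(se_a ** 2 + se_b ** 2)
    return diff, z


def flag_dif(estimates: Dict[str, Tuple[float, float, float, float]],
             z_crit: float = 1.96) -> List[str]:
    """Items whose between-class difference falls outside the two-sided 95% band."""
    flagged = []
    for item, (d_a, se_a, d_b, se_b) in estimates.items():
        _, z = dif_contrast(d_a, se_a, d_b, se_b)
        if abs(z) > z_crit:
            flagged.append(item)
    return flagged


# Hypothetical estimates in logits: (difficulty_A, SE_A, difficulty_B, SE_B)
example = {
    "item_1": (1.0, 0.3, 0.2, 0.4),  # z = 1.6, inside the band
    "item_2": (1.0, 0.2, 0.0, 0.2),  # z about 3.54, outside the band
}
print(flag_dif(example))
```

Plotting d_a against d_b and drawing the band at ±1.96 combined standard errors around the identity line gives the kind of display used in Figure 4.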

The hierarchy of item difficulty (logit units in either the abscissa or the ordinate) is contrasted across classes of subjects. The left, middle, and right column of panels refer to the self-care, respiration-sphincters, and mobility subscales, respectively. Items are represented by their numeric symbols (item sequence in the questionnaire). In each row, patients are divided into two groups according to age (above/below median on the abscissa and on the ordinate, respectively), gender (female/male), class of ability (median or above/below median), etiology (nontraumatic/traumatic), lesion level (other/cervical C1–6). The dotted curves represent the 95% confidence bands along the identity line. Below-diagonal items are relatively more difficult (higher location in the item hierarchy) for the class of subjects given in the abscissa (mirror reasoning applies for above-diagonal items)

Six countries were included in this study, making the analysis of country-DIF more complex. The average difficulty of each item within each subscale was compared between each country and the set of all the countries. The effect of the item–country interaction was investigated through ANOVA. The difference in average difficulty of SCIM III items between each country and the set of all the countries (so-called item bias) was used to assess the robustness of the SCIM III subscales with respect to national-cultural differences.

Testing the strength of the unidimensionality assumption

Ideally, items within a questionnaire should represent a unique shared construct (or a ‘latent’ person's variable), allowing their scores to be summated. For instance, the SCIM scores for walking and climbing stairs in a young patient should change as a function of the patient's overall underlying ability more than they change because of extraneous variables, such as the rater's tendency to overscore younger patients.

The fit indices reflect unidimensionality, although they do not represent it directly. Unidimensionality can be further investigated through factor analysis. To avoid confusion, it must be emphasized that the analysis actually includes two steps (see Results, Unidimensionality).

First, the variance explanation provided by the expected scores (the so-called ‘Rasch-factor’) is compared with the total score variance. Second, factor analysis is performed on the ‘residual’ variance. The question is: do the residuals conceal any hidden, structured ‘factor-variable’ rather than being randomly distributed? Finding hidden ‘factors’ in the residuals is less relevant the lower the amount of variance left unexplained by the ‘Rasch-factor.’
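The second step, factor analysis of the residuals, can be sketched as a principal component analysis of standardized residuals. This illustration rests on several assumptions and is not the Winsteps procedure used in the study. It simulates dichotomous data that fit the Rasch model by construction, it uses the generating parameters instead of estimated ones, and it reports eigenvalues of the inter-item residual correlation matrix, which sum to the number of items. A largest eigenvalue not much above what random noise produces suggests that no structured hidden ‘factor’ remains in the residuals.

```python
import numpy as np


def residual_eigenvalues(observed, abilities, difficulties):
    """Eigenvalues (largest first) of the inter-item correlation matrix of
    standardized residuals under the dichotomous Rasch model."""
    b = np.asarray(abilities, dtype=float)[:, None]     # persons as rows
    d = np.asarray(difficulties, dtype=float)[None, :]  # items as columns
    expected = 1.0 / (1.0 + np.exp(-(b - d)))           # model probabilities
    variance = expected * (1.0 - expected)              # binomial variance
    z = (np.asarray(observed, dtype=float) - expected) / np.sqrt(variance)
    corr = np.corrcoef(z, rowvar=False)                 # items x items
    return np.linalg.eigvalsh(corr)[::-1]               # descending order


# Simulated, model-fitting data (hypothetical parameters, fixed seed)
rng = np.random.default_rng(0)
abilities = rng.normal(0.0, 1.5, size=400)
difficulties = np.linspace(-2.0, 2.0, 8)
prob = 1.0 / (1.0 + np.exp(-(abilities[:, None] - difficulties[None, :])))
observed = (rng.random(prob.shape) < prob).astype(int)

eig = residual_eigenvalues(observed, abilities, difficulties)
print(np.round(eig, 2))      # eight eigenvalues that sum to 8
print(eig[0] / eig.sum())    # share of residual variance on the first component
```

With real data, a secondary dimension (for example, a rater effect tied to patient age) would tend to show up as a first eigenvalue clearly larger than in such a simulation.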

Conceptual consistency of the categories within the items

SCIM III includes items graded on several levels. The underlying assumption is that in any item, a category represented by a higher number (eg, grade 4 rather than 3) represents ‘more’ of the person's variable, and thus it is selected by fewer and more able people than a category represented by a lower number. A perhaps more implicit expectation is that subjects should not ‘bypass’ or ‘jump over’ adjacent categories as their ability increases. How far these expectations are met can be analyzed both graphically and numerically (see Figure 2 and Table 3). Failure to meet these expectations invites diagnostic investigation of the usefulness and conceptualization of the categories themselves.

(a) The items feeding, bathing, and grooming share the same category structure within the self-care SCIM scale. The probability of observing a given response (category or score) is plotted on the ordinate against the ability level to perform a specific task, which is expressed as the difference between overall patient ability and mean item difficulty (both in logit units). For comparison, mean item difficulty is always set at 0 for any item. The ability level at which adjacent categories have the same probability of occurring is called the ‘threshold.’ It may be seen that each category is the most likely response (it ‘emerges’) over a range of ability levels. As a consequence of this ‘hilly’ pattern, threshold locations between categories are ordered in the intended way (eg, a greater ability is expected when score 2 becomes more likely than 1, and an even greater ability is expected when score 3 becomes more probable than score 2). This is an example of items with ‘ordered categories’ (or, more properly, ‘ordered thresholds’). (b) The same type of plot as in (a), for the bowel management item. Unlike the self-care items, one of the categories (category 8) is never the most likely to be observed, whatever the patient's ability level. This category does not ‘emerge,’ so the expected ‘hilly’ pattern is distorted, which implies ‘disordering’ of the threshold locations. For instance, the 8/10 intersection (threshold) occurs at a functional ability level lower than that required to pass the 5/8 threshold. Patients whose ability (relative to the average item difficulty) exceeds 0.3 logit (the 8/10 threshold) are more likely to score 10 than 8, yet score 8 becomes more likely than score 5 only beyond 2.1 logits (the 5/8 threshold). Across the whole ability range, therefore, either score 5 or score 10 is more probable than score 8. This contradicts the intended meaning of categories 5, 8, and 10, which should represent increasing levels of performance. This is an example of ‘disordered categories’ within an item.
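The two diagnostics illustrated in Figure 2, whether each category ‘emerges’ and whether adjacent thresholds are ordered, can be sketched for an Andrich-type item. The thresholds in the usage example are hypothetical. The values 2.1 (5/8) and 0.3 (8/10) approximate those quoted above for bowel management, whereas the 0/5 threshold of −2.0 is invented for illustration. Ranks 0..3 stand for the raw grades 0, 5, 8, and 10. Emergence is checked by scanning a grid of ability levels, because correctly ordered adjacent thresholds alone do not guarantee that a category is ever the most probable response.

```python
import math
from typing import List, Sequence


def category_probs(theta: float, thresholds: Sequence[float]) -> List[float]:
    """Probabilities of ranks 0..m; theta = patient ability minus item difficulty."""
    log_num = [0.0]
    for tau in thresholds:
        log_num.append(log_num[-1] + theta - tau)
    top = max(log_num)
    weights = [math.exp(v - top) for v in log_num]
    total = sum(weights)
    return [w / total for w in weights]


def squeezed_categories(thresholds: Sequence[float]) -> List[int]:
    """Ranks k (1..m-1) whose upper threshold lies below their lower threshold."""
    return [k for k in range(1, len(thresholds))
            if thresholds[k] < thresholds[k - 1]]


def emergent_categories(thresholds: Sequence[float], lo: float = -8.0,
                        hi: float = 8.0, steps: int = 1601) -> List[int]:
    """Ranks that are the most probable response somewhere on a theta grid."""
    modal = set()
    for i in range(steps):
        theta = lo + (hi - lo) * i / (steps - 1)
        probs = category_probs(theta, thresholds)
        modal.add(max(range(len(probs)), key=probs.__getitem__))
    return sorted(modal)


raw_grades = [0, 5, 8, 10]  # ranks 0..3 of a bowel-management-like item
taus = [-2.0, 2.1, 0.3]     # hypothetical 0/5; approximate 5/8 and 8/10
print([raw_grades[k] for k in emergent_categories(taus)])
print([raw_grades[k] for k in squeezed_categories(taus)])
```

With these thresholds, only grades 0, 5, and 10 emerge, and grade 8 is flagged as squeezed between disordered thresholds. This reproduces qualitatively the pattern described for Figure 2b.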

Software programs

Rasch and factor analyses were performed using MJ Linacre's Winsteps software (version 3.55, 2005). ANOVA for DIF across countries was performed using MJ Linacre's FACETS software (version 3.55, 2005).

Results

Rasch ruler

The ‘person–item map’ (Rasch ruler, see Matching between the scale and the patients’ sample) is displayed in Figure 1a–c. The figure shows that in all SCIM III subscales, the distribution of item difficulty grades matched the distribution of person ability measurements in both targeting (comparing mean ability and difficulty measurements) and spread. Some floor effect is seen in the mobility subscale.

Reliability and separability of ability estimates

The real person reliability index (see Reliability of patient ability measures, separability, and discernible strata) was 0.88 for the SCIM III self-care subscale, 0.76 for the respiration and sphincter management subscale, and 0.91 for the mobility subscale. Separation index values were 2.77 for Self-care, 1.77 for Respiration and sphincter management, and 3.15 for Mobility. The separation indices values imply that at P<0.05 the measurement process succeeded in distinguishing four strata of person abilities for self-care, three for respiration and sphincter management, and four for mobility.

Data-model fit

The mean item infit index (see Fit computation) was 0.92 for the overall self-care subscale, 0.79 for the respiration and sphincter management subscale, and 0.94 for the mobility subscale. Outfit was 1.06, 0.98, and 0.89, respectively. Individual item and category fit was also satisfactory in general, as shown in Table 2a–c, where the problematic fit values (>1.4) are shown in bold. Infit deserves more attention because it is less affected by erratic outliers. Some infit-type misfit affected the mobility outdoors and stair management items. Within the categories (the grades within items), high infit values were found for category 5 in use of toilet, for category 5 in the set of three mobility items, and for categories 0 and 3 in the stair management item.

Unidimensionality

Factor analysis of the residual variance supported the unidimensionality of the SCIM III subscales (see Methods, Testing the strength of the unidimensionality assumption). Most of the observed score variance was explained by the model-expected measurements of ability: 98.8% for self-care, 99.2% for respiration and sphincter management, and 98.8% for mobility. Within the small ‘unexplained’ percentages (residual variance), about one third (0.4, 0.3, and 0.4% of the total variance, respectively) was explained by the strongest extractable factor. This means that only a negligible fraction of the SCIM score variance could not be explained by ability measurements, and that this part could be ascribed mostly to randomness and not to any extraneous hidden variable.

Category ordering

Category thresholds (see Methods, Conceptual consistency of the categories within the items) were in proper hierarchical ordering in most of the items. In all items of the self-care subscale all of the categories emerged, that is, they were the most likely response for a range of ability levels (Table 3a and Figure 2a). Most of the categories in the sphincter-respiration and mobility subscales were also emergent, but disordered categories were observed in some of their items (Tables 3b and c).

The item bowel management of the sphincter-respiration subscale provides an example of disordered thresholds (Figure 2b). Among the categories used to grade this item, only categories 0, 5, and 10 ‘emerge’ sharply, and the category scored 8 is clearly nonemergent. The transition from score 8 to 10 occurs at a lower level of ability than the transition from score 5 to 8, contradicting the intended meaning of the categories.

Threshold imprinting

Figure 3a–c shows the distribution of category thresholds along the Rasch ruler (see Methods, Matching between the scale and the patients’ sample). The numerous ‘ticks’ remarkably improve the match between difficulty and ability levels compared with the Rasch ruler made up of mean item difficulties only (Figure 1a–c). A concern is raised by the floor effect of the mobility subscale, where patient abilities tend to crowd around low levels.

Differential item functioning

DIF (see Methods, Differential item functioning) was of minor relevance for most items across contrasted subgroups of age (above/below median), gender, class of ability (above/below the median measure), lesion etiology (traumatic/nontraumatic), and lesion level (cervical/other) (Figure 4). This holds for all three subscales: Self-Care, Respiration-Sphincter, and Mobility. In most contrasts, item difficulty values in the X–Y plots lay within the 95% confidence bands surrounding the identity line.15 This should be seen as ‘indicating the invariance of the Rasch measures across the sub-groups of interest.’22 The only prominent deviation from this pattern is the DIF between patient groups with low or high overall mobility SCIM scores (Figure 4, right column, center panel: DIF by class of ability). The bed-mobility and transfer items are relatively more difficult for patients with below-median mobility SCIM scores, while the locomotor items (mobility indoors and outdoors) are relatively more difficult for patients with above-median mobility scores.

Figure 5 shows the differences in the average difficulty (on the ordinate) of SCIM III items (on the abscissa) between each country and the set of all countries (so-called item bias). This difference did not exceed 1 logit for most items. For the self-care items (Figure 5a), the bias range was −1.7 to +1.1 logit, and the bias was significant (P<0.05) in only 15 out of the 36 contrasts (6 items × 6 countries), taken one at a time, between country-specific and all-countries difficulties. For the sphincter-respiration items (Figure 5b), the bias range was −1.46 to +0.68 logits. Only six out of 24 potential contrasts (4 items × 6 countries) showed a significant bias. The most prominent bias values were those of Germany for respiration and bowel-management. For the mobility items (Figure 5c), the bias range was −2.83 to +1.52 logits. Only in 13 of 54 potential contrasts (9 items × 6 countries) was the bias significant. The most prominent bias values were those of Germany and Israel for ground-wheelchair transfers.

The figure depicts the difference in average item difficulty (‘bias’ in logit unit, on the ordinate) between each country and the set of all the countries. The average difficulty of each item (upper abscissa) across the pool of countries (see symbols) was arbitrarily set at 0. Panels a, b, and c refer to the self-care, sphincter-respiration, and mobility subscales, respectively

Discussion

Rasch analysis indicated that SCIM III subscale measurements are valid and reliable measures of the underlying hypothesized construct, which is the ability of patients with SCL to perform relevant daily tasks. The three subscales represent a valid profile of patients’ functional status. This has been shown through: (a) the reliability and fit estimates, the nearly ordered category structure, and the substantive absence of DIF across clinically relevant subgroups of patients; (b) the substantive match between the distribution of difficulty levels and patient abilities, which supports the practical usefulness of the instrument when applied to SCL patients; and (c) the analysis of DIF between the difficulty of items in each country and the aggregated values across countries, which shows a substantive metric equivalence of the instrument across the six countries participating in the study.

Cross-cultural equivalence is a key outcome of this study. The significant bias values recorded seem acceptable. Reaching significance (P<0.05) was made easier by omitting any correction for multiple comparisons (eg, Bonferroni correction); the study therefore reports a ‘worst-case’ scenario. Moreover, most comparisons did not show significant differences despite the lack of P-value correction. Note that the same range of country bias was found for similar items in stroke patients using a valid standard instrument for generic disability measurement, the Functional Independence Measure (FIM™).23
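The effect of the omitted correction can be made concrete with the contrast counts reported in the Results (36, 24, and 54 item-by-country contrasts). This Python sketch computes the Bonferroni-adjusted per-contrast significance level for each subscale; the helper name is hypothetical, and because individual P values are not reported here, it does not show which contrasts would survive the correction.

```python
ALPHA = 0.05  # nominal significance level applied to each contrast in the study

# Item-by-country contrasts per subscale (items x 6 countries), as reported in the Results.
CONTRASTS = {
    "self-care": 6 * 6,
    "sphincter-respiration": 4 * 6,
    "mobility": 9 * 6,
}


def bonferroni_alpha(alpha: float, n_tests: int) -> float:
    """Per-test significance level that keeps the family-wise error rate at alpha."""
    return alpha / n_tests


for subscale, m in CONTRASTS.items():
    print(f"{subscale}: {m} contrasts, per-contrast alpha = {bonferroni_alpha(ALPHA, m):.5f}")
```

This prints per-contrast thresholds of 0.00139, 0.00208, and 0.00093, respectively: with the correction, a contrast would need P below roughly 0.001 to 0.002, rather than P<0.05, to be declared significant.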

Rasch analysis also highlighted some flaws, suggesting a need for further refinement of the scale. These include some items and categories that systematically elicited unexpected responses, leading to significant misfit, although the mean item fit of each subscale was satisfactory and supported the clinical use of its cumulative score.

Given the number of items and categories tested for fit (Table 2a–c), it is statistically unsurprising that a few of them show misfit beyond the proposed cutoff of 1.4 (corresponding to the upper 95% CI of expected fit values). Outfit-type misfit in items and categories is presumably driven by a few exceptional outliers. This is a useful flag for the local rater (who were the ‘deviant’ cases, and why?), but it hardly indicates any malfunctioning of the scale. Infit values >1.4 are found only for the walking outdoors and stair management items, within the Mobility subscale (Table 2b). Infit-type misfit is also found within the Respiration/Sphincter subscale, for category 5 (full independence in toilet use). Perhaps because fully independent patients are scarce, the misfit of this category does not carry over to the item as a whole. Infit-type misfit suggests a hidden systematic bias, whose origin may be found in Figure 4 (right column), which represents the mobility DIF between high- and low-performers. Paradoxically, low-performers find upper-limb-related items (such as mobility in bed and transfers) more difficult than locomotion items. The same row shows that use of toilet is also more difficult than other items for low-performers. This functional profile may be seen, for instance, in some persons with tetraplegia (who have low overall scores but can still move about in an electric wheelchair) or in patients with central SCLs that affect upper-limb motion while leaving some form of gait possible.
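The contrast between outfit- and infit-type misfit can be illustrated with a minimal Python sketch. For simplicity it uses the dichotomous Rasch model rather than the polytomous models appropriate to SCIM III categories, and all abilities, responses, and function names are hypothetical. Outfit is the unweighted mean of squared standardized residuals; infit weights each squared residual by its model variance, so responses from persons far from the item's difficulty, who carry little information, count less.

```python
import math


def rasch_probability(theta: float, b: float) -> float:
    """Probability of a positive response under the dichotomous Rasch model."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))


def fit_mnsq(thetas: list[float], responses: list[int], b: float) -> tuple[float, float]:
    """Return (infit, outfit) mean-square statistics for one dichotomous item of difficulty b."""
    sq_residuals, variances, z_squared = [], [], []
    for theta, x in zip(thetas, responses):
        p = rasch_probability(theta, b)  # expected score E
        w = p * (1.0 - p)                # model variance W
        r2 = (x - p) ** 2                # squared raw residual
        sq_residuals.append(r2)
        variances.append(w)
        z_squared.append(r2 / w)         # squared standardized residual
    outfit = sum(z_squared) / len(z_squared)    # unweighted: sensitive to outliers
    infit = sum(sq_residuals) / sum(variances)  # information-weighted
    return infit, outfit


# Hypothetical person abilities (logits) for an item of difficulty 0.
thetas = [-2.0, -1.5, -1.0, -0.5, 0.5, 1.0, 1.5, 2.0, 4.0]
expected = [0, 0, 0, 0, 1, 1, 1, 1, 1]
outlier = [0, 0, 0, 0, 1, 1, 1, 1, 0]  # the most able person unexpectedly fails

for label, pattern in [("expected", expected), ("outlier", outlier)]:
    infit, outfit = fit_mnsq(thetas, pattern, b=0.0)
    print(f"{label}: infit={infit:.2f}  outfit={outfit:.2f}")
```

In this toy example, the single unexpected failure of the most able person raises outfit from 0.30 to 6.36, far beyond the 1.4 cutoff, while infit rises only from 0.38 to 1.07 and stays below it. This is why outfit misfit tends to point to isolated outlying cases, whereas infit misfit is more suggestive of a systematic problem.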

If this interpretation holds (that is, if misfit results from impairment-based DIF), a change in the SCIM III mobility items, or their ‘split’ into impairment-specific items,23 may be recommended. The same holds for the use-of-toilet item (at least for its category 5).

When category ordering was analyzed, problems were found in several items of the Respiration/Sphincter and Mobility subscales (Table 3), in which some categories were used less frequently than expected. The interpretation is not straightforward. Either the condition described by a category is rarely observed in practice (perhaps for clinical reasons or for reasons of ‘privacy’), or the raters tended to simplify scoring by selecting only neighboring categories, or both.

‘Empty’ categories do not necessarily threaten the validity of the measurement process. They add unnecessary burden to the questionnaire, but they may mark clinically significant steps toward recovery if the questionnaire is also used with individual patients for goal setting and as a checklist of benchmarks of progress.
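Threshold disordering of the kind discussed above can be illustrated with a hedged Python sketch that assumes the Rasch partial credit parameterization, in which the log-odds of scoring in category k rather than k − 1 is θ − δ − τk. The item difficulty δ, the thresholds τk, and the helper names are hypothetical. When two adjacent thresholds are reversed, the category between them is never the most probable response at any ability level, which fits the observation that some categories were used less often than expected.

```python
import math


def category_probabilities(theta: float, delta: float, taus: list[float]) -> list[float]:
    """Category probabilities for one item under the Rasch partial credit model."""
    # Unnormalized log-probabilities: category 0 has an empty sum,
    # category k adds (theta - delta - tau_j) for j = 1..k.
    log_numerators = [0.0]
    for tau in taus:
        log_numerators.append(log_numerators[-1] + (theta - delta - tau))
    top = max(log_numerators)  # subtract the maximum for numerical stability
    exps = [math.exp(v - top) for v in log_numerators]
    total = sum(exps)
    return [e / total for e in exps]


def thresholds_ordered(taus: list[float]) -> bool:
    """True if every threshold is strictly higher than the previous one."""
    return all(lo < hi for lo, hi in zip(taus, taus[1:]))


def modal_categories(delta: float, taus: list[float], grid: list[float]) -> set[int]:
    """Categories that are the most probable response somewhere on the ability grid."""
    modes = set()
    for theta in grid:
        probs = category_probabilities(theta, delta, taus)
        modes.add(max(range(len(probs)), key=probs.__getitem__))
    return modes


grid = [i / 10 for i in range(-40, 41)]  # abilities from -4 to +4 logits
ordered = [-1.5, 0.0, 1.5]               # hypothetical, properly ordered thresholds
disordered = [0.5, -0.5, 1.5]            # hypothetical, thresholds 1 and 2 reversed

for taus in (ordered, disordered):
    print(taus, thresholds_ordered(taus), sorted(modal_categories(0.0, taus, grid)))
```

With ordered thresholds every category (0 to 3) is modal somewhere on the ability continuum; with the first two thresholds reversed, category 1 never is, even though it remains on the form.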

The crowding of patient ability levels at the bottom of the mobility ‘ruler’ is an additional problem of the Mobility subscale. This finding may be due to the fact that only admission ratings were considered; mobility levels are expected to be much higher at discharge. In any case, the less-than-complete targeting of the SCIM items suggests a need to add new, well-fitting items, at least at the bottom end of the SCIM mobility ruler.
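Targeting can be summarized, for example, by the offset between the mean person measure and the mean item difficulty, and by the share of persons located below the easiest item (a floor effect). The Python sketch below uses hypothetical admission measures crowded at the bottom of a hypothetical nine-item mobility ruler; the helper name and all numbers are illustrative assumptions, not study data.

```python
from statistics import mean


def targeting_summary(person_measures: list[float],
                      item_difficulties: list[float]) -> tuple[float, float]:
    """Return (person-minus-item mean offset in logits, share of persons below the easiest item)."""
    offset = mean(person_measures) - mean(item_difficulties)
    easiest = min(item_difficulties)
    below_floor = sum(m < easiest for m in person_measures) / len(person_measures)
    return offset, below_floor


# Hypothetical mobility ruler (item difficulties centred at 0) and admission measures.
items = [-2.0, -1.5, -1.0, -0.5, 0.0, 0.5, 1.0, 1.5, 2.0]
admission = [-4.0, -3.5, -3.0, -3.0, -2.5, -2.5, -2.5, -1.5, -1.0, 0.5]

offset, below_floor = targeting_summary(admission, items)
print(f"mean offset = {offset:+.2f} logits; {below_floor:.0%} of persons below the easiest item")
```

Here the persons sit on average 2.3 logits below the items and 70% of them fall below the easiest item, the pattern that adding easier, well-fitting items would be meant to correct.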

Rater bias also needs to be addressed. Raters from various professions took part in the present study in unbalanced proportions (nurses and occupational therapists far outnumbered physiotherapists and physicians), which may have added variance (a possible rater-related DIF) to the estimated difficulty of, for example, mobility versus self-care items.

Other limitations of the study stem from the enrollment of spinal units from only six countries; future participation of units from other countries may further challenge the cross-cultural validity of SCIM. Additionally, the fact that the SCIM developers coordinated the study might have biased it toward favorable results. However, data analysis and interpretation were led by the third author, who was not involved in developing SCIM, coordinating the study, or collecting the data.

All the above shortcomings must be addressed in future refinements of the instrument. Useful suggestions regarding the possible removal, splitting or rephrasing of some items or categories have already emerged from the present study.

Overall, the international study supports the validity, reliability, and usefulness of the SCIM III profile in a multicultural setting, and it indicates that the instrument, made up of three valid subscales, can be safely introduced into clinical trials, including international multicenter studies.

References

Itzkovich M et al. Rasch analysis of the Catz-Itzkovich spinal cord independence measure. Spinal Cord 2002; 40: 396–407.

Catz A, Itzkovich M, Agranov E, Ring H, Tamir A. SCIM – spinal cord independence measure: a new disability scale for patients with spinal cord lesions. Spinal Cord 1997; 35: 850–856.

Catz A et al. The Catz-Itzkovich SCIM: a revised version of the Spinal Cord Independence Measure. Disabil Rehabil 2001; 23: 263–268.

Catz A et al. SCIM – spinal cord independence measure (version II): sensitivity to functional changes. Harefuah 2002; 141: 1025–1031 (in Hebrew).

Catz A et al. Disability assessment by a single rater or a team: a comparative study with the Catz-Itzkovich spinal cord independence measure. J Rehabil Med 2002; 34: 226–230.

Itzkovich M et al. Reliability of the Catz-Itzkovich Spinal Cord Independence Measure assessment by interview and comparison with observation. Am J Phys Med Rehabil 2003; 82: 267–272.

Catz A, Itzkovich M. Trends in the assessment of functional outcomes after spinal cord lesions. In: Soroker N, Ring H (eds). Advances in Physical and Rehabilitation Medicine. Monduzzi: Bologna 2003, pp 123–127.

Popovic MR, Thrasher TA, Adams ME, Takes V, Zivanovic V, Tonack MI. Functional electrical therapy: retraining grasping in spinal cord injury. Spinal Cord 2006; 44: 143–151.

Tesio L. Measuring person's behaviours and perceptions: Rasch analysis as a tool for rehabilitation research. J Rehabil Med 2003; 35: 1–11.

Luce RD. Dimensionally invariant numerical laws correspond to meaningful qualitative relations. Philos Sci 1978; 45: 1–16.

Wright BD, Linacre JM. Observations are always ordinal; measurements, however, must be interval. Arch Phys Med Rehabil 1989; 70: 857–860.

Rasch G. Probabilistic Models for Some Intelligence and Attainment Tests. Danish Institute for Educational Research 1960. Expanded edition with foreword and afterword by Wright BD. The University of Chicago Press: Chicago 1980 (reprinted by MESA Press: Chicago 1993).

Andrich D. Rasch Models for Measurement. Sage Publications: Newbury Park, CA, USA 1988.

Wright BD, Stone MH. Best Test Design: Rasch Measurement. MESA Press: Chicago 1979.

Wright BD, Masters GN. Rating Scale Analysis: Rasch Measurement. MESA Press: Chicago 1982.

Tesio L. Measurement in clinical versus biological medicine: the Rasch model as a bridge on a widening gap. J Appl Meas 2004; 5: 362–366.

Linacre JM, Wright BD. Construction of measures from many-facet data. J Appl Meas 2002; 3: 486–512.

Bond TG, Fox CM. Applying the Rasch Model: Fundamental Measurement in the Human Sciences. Erlbaum Publishers: Mahwah, NJ 2001.

Penta M, Arnould C, Decruynaere C. Développer et Interpréter Une Échelle de Mesure. Applications du Modèle de Rasch. Mardaga: Hayen, Belgium 2005.

Wright BD, Mok M. Rasch models overview. J Appl Meas 2000; 1: 83–106.

Smith RM. Fit analysis in latent trait measurement models. J Appl Meas 2000; 1: 199–218.

Bond TG. Validity and assessment: a Rasch measurement perspective. Metodología de las Ciencias del Comportamiento 2003; 5: 179–194.

Tennant A et al. Assessing and adjusting for cross-cultural validity of impairment and activity limitation scales through differential item functioning within the framework of the Rasch model: the PRO-ESOR project. Med Care 2004; 42 (Suppl): 37–48.

Acknowledgements

This study was supported by the Unit of Medical Services, Rehabilitation Department, Israel Ministry of Defense, Toronto Rehab, and The Rick Hansen Man In Motion Foundation Research Fund. We thank Viviana Rota, Eng, from Milan, Italy, for providing helpful assistance in graphic editing. Our special thanks go to Hanne Vest Hansen, RN, from Copenhagen, Denmark, Chris Wilson (Consultant Clinical Psychologist), Mary Watkins (Assistant Psychologist), Sister Maryse Mackenzie, and Mr S Jallul, Specialist Registrar, from Oswestry, UK, to Ora Philo, RN, from Raanana, Israel, to the staff members of all the participating units, and to all the patient volunteers who took part in the study.


Appendix The Spinal Cord Independence Measure, Version III



Cite this article

Catz, A., Itzkovich, M., Tesio, L. et al. A multicenter international study on the Spinal Cord Independence Measure, version III: Rasch psychometric validation. Spinal Cord 45, 275–291 (2007). https://doi.org/10.1038/sj.sc.3101960
