Abstract

This study explores how four primary emotions (anger, happiness, sadness, and fear) are expressed through drawings. Moving beyond the well-researched link between color and emotion, it examines under-studied aspects such as spatial concepts and drawing styles. Using Python and OpenCV for objective analysis, we converted subjective perceptions into measurable data from 728 digital images drawn by 182 university students. The most frequently chosen prominent colors were red for anger (73.11%), yellow for happiness (47.8%), blue for sadness (51.1%), and black for fear (40.7%). Happiness had the highest saturation (68.52%) and brightness (75.44%) percentages, while fear had the lowest in both (47.33% saturation, 48.78% brightness). Fear, however, had the highest color fill percentage (35.49%), and happiness the lowest (25.14%). Tangible imagery prevailed (71.43–83.52%), with abstract styles peaking in fear representations (28.57%). Facial expressions were a common element (41.76–49.45%). The model achieved 81.3% predictive accuracy for anger, higher than the 71.3% overall accuracy. Future research can build on these results by refining technological methods to quantify more aspects of drawing content. Investigating a broader range of emotions and the factors that shape emotional drawing styles will further our understanding of visual-emotional communication.


Introduction

The art of drawing is a substantial conduit for exploring the rich complexities of human emotions, offering unique insights into non-verbal emotional expression. The growth of digital technologies affords broader opportunities for investigating and understanding these expressions, opening new avenues of research. The progression from emotional expression to perception, culminating in inference, is complex and challenging1. The I-SKE framework captures the essence of aesthetic experience through the interplay of sensation, knowledge, and emotion, all set in motion by the beholder's intention2. Within the domain of sensation, the connection between color and emotion, which echoes the theory of arousal and valence3, has been well studied4,5,6,7,8,9,10,11,12. Beyond color, our study also explores space and drawing styles, relating these elements to theories of arousal and valence as well as to notions of approach and avoidance. By integrating participant-generated visual methodologies13, our study addresses methodological limitations highlighted in previous research and offers a fresh perspective on the relationship between drawings and emotion that takes the content of emotional expression into account.

Firstly, previous studies have deepened our insight into the intricate relationship between colors and emotions4,5,6,7,8,9,10,11,12, most of which echo the theory of arousal and valence14. Earlier research typically presents participants with a predefined range of colors to associate with emotions4,5,6,7,8,9,10,11,12. While this method has its merits, it may constrain the genuine expression of emotions.

Secondly, awareness and recognition of emotion in drawings hinge on content. Perceptions can differ when the same color appears on different facial expressions or in varying circumstances15, underscoring the role of content and individual factors in color-emotion association studies. Thirdly, there are methodological challenges. While drawings offer a creative and insightful way to capture emotional expressions, their interpretation and qualitative analysis are inherently subjective, which has limited research in this area16. Researchers have concentrated on abstract drawings involving color or lines4 and on stroke techniques17,18, but few studies have specifically examined space or drawing styles in relation to emotion. This study employs participant-generated visual methodologies13 to bridge this gap in the literature and enhance authenticity. It treats participants as active 'knowers' rather than passive observers who merely select colors from limited choices.

We aimed to answer the following research questions:

First, did the colors used in drawings vary among the four primary emotions: anger, happiness, sadness, and fear? How were they associated with the theory of arousal and valence?

Second, was the use of space in drawings related to the theory of approach and avoidance?

Third, did depiction styles differ across emotions?

Fourth, how did these variables predict the four emotions?

This study involved 182 university students creating drawings to express four primary emotions: anger, happiness, sadness, and fear. Using Python and OpenCV, we analyzed these digital drawings, converting subtleties that previously could be assessed only through human perception into quantifiable data. This use of digital technology enhances our understanding of non-verbal emotional expression.

Literature review

Drawings and emotion

Previous research suggests that drawing can serve as a medium for disclosing emotions17,19,20. Drawings encompass a variety of elements such as color, composition, and content. Overall, research analyzing drawings to uncover emotions follows two main directions: the first involves quantitative methods focusing on color pairing, whereas the second employs qualitative or mixed methods relying on human judgment.

For decades, the nexus between color and emotion has garnered academic attention. One study highlighted the significant emotional effects of saturation and brightness11; preferred hues included blue, red–purple, and blue-green, whereas yellow was less favored. Another study indicated that primary hues, notably green, triggered positive emotions and evoked relaxation8; among achromatic shades, white was rated most positively. Lastly, a recent study revealed that saturated, bright colors were associated with heightened arousal, with a notable arousal gradient from blue to red21.

Research on emotion-color associations primarily occurs in controlled settings. These investigations often use emotional words5 or specific colors9,12,21 to deduce emotional links. One study laid the foundation by identifying a low-to-moderate consensus on color-emotion pairings4. Subsequent investigations by Jonauskaite et al.22, notably in 2019, went further, linking hues, lightness, and chroma to specific emotions: yellow to joy, yellow-green to relaxation, and lighter shades to positivity. This work, which primarily involved young adults, underscored the role of age in color-emotion perception. Their landmark 2023 study (n = 7393), spanning 31 countries, revealed age-related nuances in color-emotion associations, demonstrating a connection that is universal yet age-distinct: older adults showed more positive and more specific color responses, whereas adolescents showed a less positive bias7.

Reynolds et al. used image analysis software to measure heart drawings and correlated the measurements with anxiety and depression23. Participants' paper drawings were scanned and analyzed by area and height. Their findings indicated that larger hearts were associated with increased heart-specific anxiety and negative perceptions of illness among heart failure patients. Another study applied content analysis to 314 paper drawings, accompanied by explanations from the children or notes from their teachers24. The researcher examined symbols and colors and classified them into six fear categories. The results identified prevalent emotions and fears, with animal-related fears being the most common. One recent study trained raters to evaluate 17 formal elements in artwork, including color, composition, and space20, and found significant correlations between the combination of movement, dynamic, and contour in artwork and clients' mental health. Another qualitative study of 132 college students used graphic elicitation to explore happiness in leisure activities25; combining participants' drawings with their spoken explanations, it found a strong link between happiness and leisure aspects such as time, space, and activities. Damiano et al. quantitatively decoded emotional expressions in abstract color and line drawings4, noting that color drawings by non-artists expressed emotions more clearly than those by artists. Overall, investigations of emotion through drawing analysis have advanced slowly and remain sparse, and the impact of depiction style, including abstract versus tangible expression and spatial elements, remains underexplored. Whereas drawing content analysis often relies on subjective judgments by human raters, computers are generally employed to examine objective attributes such as color, size, and line characteristics.

While the experimental constraints of these studies may restrict their real-world applicability, qualitative analysis of drawings allows subjective emotional expressions to be captured in a nuanced way. Using mixed methods in drawing analysis could offer a more comprehensive understanding of emotional experiences.

Psychological theoretical framework for emotional expression

Emotions combine physiological and cognitive elements and influence behavior. Scholars differ on which emotions are basic. Ekman and Davidson26 list happiness, anger, fear, sadness, disgust, surprise, and contempt, while Tomkins27 includes interest, enjoyment, surprise, distress, fear, anger, and others. Alternatively, some researchers organize emotions hierarchically, categorizing them as positive or negative and further subdividing them into more specific subcategories. The circumplex model of affect, by contrast, posits that all affective states are determined by two distinct dimensions: valence and arousal3,28. According to this model, emotions can be categorized by their position along these dimensions. For instance, happiness is associated with positive valence and high arousal; sadness with negative valence and low arousal; and anger and fear with negative valence and high arousal.

According to Schachter and Singer14, the experience and recognition of emotions depend on general physical arousal and the interpretation of that arousal based on environmental cues, emphasizing the cognitive process known as appraisal. Appraisal theories of emotion, well-established in psychology, shed light on individual variations in emotions and how a broad range of emotions arise29. Consequently, emotions are not directly triggered by objective features or events in the world but rather through an indirect inference.

The universality of emotions is evident in basic emotional expressions26. However, how these emotions are expressed, especially in digital communication, reflects broader cultural shifts. Liao10 showed that both the affective tone of an emoticon and its background color can significantly influence its emotional potency in computer-mediated exchanges, enriching our understanding of emotional expression in digital forms. Emojis dominate modern communication, especially among Gen Z and Millennials30. Research underscores that emojis are processed more rapidly than words31 and are prominent in virtual workplaces32. This shift, influenced by cultural transitions, could reshape emotional expression patterns in digital communication.

Emerging technologies and the advancement of emotion recognition

Advanced technology is propelling emotion recognition research, simplifying both data collection and emotion identification. These methods are unobtrusive, causing minimal disruption to respondents. Jonauskaite et al. developed a color picker program that allowed 88 participants to select their most and least preferred colors in different contexts, revealing precise color ranges and preferences based on personal experience33. One study evaluated the emotions of 129 subjects through writing and drawing, emphasizing the importance of timing and ductus in strokes34; using Random Forest classifiers for feature analysis, it detected depression with 67.8% accuracy from writing, 71.6% from drawing, and 71.2% from both combined. Another study investigated the attributes of drawing strokes captured with positional pen technology, suggesting that these strokes could offer insights into a user's cognitive state18. A recent study used the Emothaw database to detect depression, anxiety, and stress from handwriting and drawing, achieving average accuracies of 71.06% for depression, 57.93% for anxiety, and 56.93% for stress with a radial basis function SVM evaluated by the leave-one-out technique16. Another recent study analyzed handwriting and drawing in 49 participants with varied mental health status35. Data were collected using an INTUOS WACOM series 4 digital tablet and categorized into pressure, ductus, time, space, and inclination; stroke counts varied significantly between groups and correlated with depression severity. Damiano et al. used computational methods to analyze emotions in drawings, comparing them with averaged reference sets for each emotion category and employing histograms of contour features for line drawings4. This approach predicted emotions by matching drawings to emotion-specific statistical profiles, demonstrating the technique's effectiveness for emotion recognition.

Collectively, these studies underscore the transformative impact of technology on emotion recognition research. From analyzing color-emotion associations to handwriting and drawing, emerging technologies have broadened the horizon for understanding complex emotional states.

Methods

Data collection

Initially, we received samples from 202 participants; after unreadable or incorrect images were removed, 182 remained. Of these, 67 participants opted out of data sharing (i.e., they requested that their data be destroyed after the study and declined sharing for further research). Accordingly, the drawings of the remaining 115 participants (460 images) and the secondary data from all 182 participants are available to interested researchers.

All participants were students enrolled in elective courses. All were screened for color vision deficiencies, and none were found. Participants received a blank digital JPG file on their smartphones on which to draw the four emotions, and they were instructed to modify the blank JPG using their phone's built-in editing tools or preferred software.

The instruction was: "Draw how you perceive anger, happiness, sadness, and fear. Each emotion should be drawn separately on individual documents; do not combine all emotions into one document. Please ensure that each emotion is depicted on its distinct page. Artistic skills are not essential; simple sketches will do. Please finish within 30 min. We are interested in your expression of emotions in drawing." Participants also wrote a reflection on their creations, explaining their rationale. All participants signed a written informed consent form, and the drawings of those who opted out were excluded from data sharing. Although the study collected identifiable information, it was used exclusively for internal linkage and was anonymized before analysis. The research was approved by the Institutional Review Board of National Cheng Kung University Hospital (B-ER-109–424). All methods were performed in accordance with the relevant guidelines and regulations.

Image processing and measurements

Image color processing and recognition

To analyze image color profiles, we used Python (version 3.10)36 and OpenCV (version 4.8.0.74)37. Python is a widely used high-level programming language known for its readability and flexibility, which suited our data analysis needs. OpenCV is a comprehensive library providing numerous image processing and computer vision algorithms. Within Python, we used OpenCV to process the images, convert them into appropriate color spaces, and isolate individual color channels, enabling accurate quantification of color information. We first defined the colors in the HSV color space from their RGB values. Previous studies used the Munsell color system and other perceptually uniform spaces such as Lab, LCh, and xyY, which more accurately reflect human perception9,12; recent studies38,39 further emphasize the value of perceptually uniform color spaces for representing color accurately in digital contexts.

The HSV color space offers a more perceptually intuitive color model than RGB, describing color by hue, saturation, and brightness (value)40. Hue (H) ranges from 0 to 360 degrees (or 0–1), representing position on a color wheel, while saturation (S) and value (V) quantify intensity and brightness on a 0–100% scale. For digital graphics, HSV values are often rescaled to 0–255 to fit 8-bit color channels. In OpenCV, hue for 8-bit images is halved to a 0–180 range, while S and V span 0–255. This rescaling helps standardize color representation across software. Table 1 details both the general HSV ranges and their OpenCV adaptations; for clarity, we focus here on the OpenCV configuration.
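The mapping between the general HSV ranges and OpenCV's 8-bit convention described above is simple arithmetic. The sketch below converts a general triple (H in degrees, S and V in percent) into OpenCV units; the function name `to_opencv_hsv` is hypothetical, and wrapping 360 degrees back to 0 reflects the circularity of hue rather than any rule stated in the study.

```python
def to_opencv_hsv(h_deg: float, s_pct: float, v_pct: float) -> tuple[int, int, int]:
    """Map general HSV (H in 0-360 degrees, S and V in 0-100%) to OpenCV's
    8-bit convention (H in 0-179, S and V in 0-255). Hypothetical helper."""
    if not (0 <= h_deg <= 360 and 0 <= s_pct <= 100 and 0 <= v_pct <= 100):
        raise ValueError("expected H in [0, 360] and S, V in [0, 100]")
    # OpenCV halves hue so it fits in 8 bits; hue is circular, so 360 wraps to 0
    h = int(round(h_deg / 2)) % 180
    # Saturation and value are rescaled from percent to the 0-255 byte range
    s = int(round(s_pct * 255 / 100))
    v = int(round(v_pct * 255 / 100))
    return h, s, v
```

For example, pure blue at (240°, 100%, 100%) maps to (120, 255, 255), which matches what `cv2.cvtColor` produces for a pure blue pixel.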

To analyze color profiles in images, we defined seven key variables, operationally defined as follows.

(1) Prominent color: the primary color associated with each emotion. For instance, if red is determined to be the prominent color for an emotion, it is coded as "1" and all other colors are coded as "0".

(2) Color percentage: the extent to which a given color fills an image representing a specific emotion, calculated as the number of pixels of that color divided by the total number of pixels, expressed as a percentage.

(3) Number of colors used: the number of colors used in a drawing. Seven colors were defined for expressive purposes; white was excluded because it represented the blank canvas.

(4) Saturation percentage: saturation measures color intensity in the HSV model, ranging from 0 to 100%. The saturation percentage is calculated as the average S component in the HSV color space40,41.

(5) Brightness percentage: the perceived intensity or luminance of a color (the V component in the HSV model), indicating how much it deviates from a neutral color of the same saturation, such as a gray shade with an equivalent value42.

(6) Color fill percentage: the proportion of the canvas occupied by color.

(7) Image coverage percentage: the proportion of the canvas covered by the drawn subject, including any blank spaces within it.

Types of emotional depictions

Participants' drawing styles, which were of central interest, varied widely from realistic to abstract forms, including free-flowing lines. This inconsistency makes the styles difficult for programs and learning algorithms to recognize and quantify. Therefore, three research assistants categorized content and depiction styles. After the categorization criteria were refined over four iterations, kappa statistics for inter-rater agreement ranged from 0.85 to 0.92.
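The text does not specify which kappa variant was used. With three raters, one common option is to average Cohen's kappa over all rater pairs (Fleiss' kappa is another); the sketch below shows that pairwise approach as an assumption, with hypothetical helper names and style labels.

```python
from collections import Counter
from itertools import combinations


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labeled the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same, non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items that received the same label
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the two raters' marginal label proportions
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    p_e = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if p_e == 1:
        # Both raters used one identical label throughout; kappa is undefined,
        # and this sketch treats the case as perfect agreement
        return 1.0
    return (p_o - p_e) / (1 - p_e)


def mean_pairwise_kappa(ratings):
    """Average Cohen's kappa over all pairs of raters (one summary for 3+ raters)."""
    pairs = list(combinations(ratings, 2))
    return sum(cohens_kappa(a, b) for a, b in pairs) / len(pairs)
```

For instance, with hypothetical labels such as "facial", "symbolic", and "abstract" assigned by each assistant to the same drawings, `mean_pairwise_kappa([labels_1, labels_2, labels_3])` returns a single agreement coefficient on the same 0–1 scale as the values reported above.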

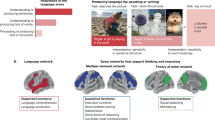

Respondents' drawings representing four emotions were categorized as tangible (Fig. 1) or abstract (Fig. 2). The operational definitions for each are as follows:

Tangible emotion depictions: (1) Facial expressions: drawings primarily focused on faces expressing emotions. (2) Body language: drawings focused on body postures or movements expressing emotions. (3) Symbolic representation: drawings that use only symbols to represent emotions. (4) Narrative illustration: drawings that tell a story or depict a situation leading to the emotion.

Abstract emotion depictions: (5) Ambiguous expressions: drawings in which the depiction of emotion is abstract or non-obvious.

Data analyses

All analyses were performed using SPSS (version 27)43. Descriptive statistics summarized participants' characteristics and the themes of the drawings conveying the four emotions. We then applied inferential methods: chi-square tests and ANOVA were used to assess differences in drawing features across emotions. Multinomial logistic regression was used to classify the emotion of each drawing from prominent color, saturation, brightness, number of colors, color fill, image coverage, and the five depiction styles (as shown in Figs. 1 and 2). This method estimates parameters with an iterative maximum-likelihood algorithm and is well suited to assigning subjects to categories from predictor variables44. To strengthen the reliability of the model estimates, we applied bootstrapping with 5000 resamples, a standard resampling technique45. Additionally, we used dummy variables for color percentages to avoid collinearity. Model performance was assessed with an accuracy metric, and a classification table compared predicted with actual emotion labels46.
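The regression itself was fitted in SPSS; the sketch below only illustrates how a classification table and its accuracy figures can be derived once predicted labels are available. It assumes the common convention of rows for actual labels and columns for predicted labels, with per-emotion accuracy as the diagonal cell divided by the row total; the function name and the toy labels are hypothetical.

```python
from collections import Counter

EMOTIONS = ("anger", "happiness", "sadness", "fear")


def classification_table(actual, predicted, labels=EMOTIONS):
    """Cross-tabulate actual (rows) against predicted (columns) labels and
    return the table, percent correct per actual class, and overall percent correct."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("actual and predicted must be non-empty and of equal length")
    counts = Counter(zip(actual, predicted))
    table = {a: {p: counts[(a, p)] for p in labels} for a in labels}
    per_class = {}
    for a in labels:
        row_total = sum(table[a].values())
        # Percent correct for a class: diagonal cell divided by its row total
        per_class[a] = 100.0 * table[a][a] / row_total if row_total else float("nan")
    # Overall percent correct: all diagonal cells divided by all cases
    overall = 100.0 * sum(table[a][a] for a in labels) / len(actual)
    return table, per_class, overall
```

With six toy drawings of which four are classified correctly, the overall accuracy is about 66.7%; the reported figures (e.g., 81.3% for anger, 71.3% overall) are percentages of this kind.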

Results

Comparison of the drawings across different emotions

Table 2 details the demographics of 182 study participants, with an average age of 23.07 (SD = 5.40). Gender distribution includes 106 females (58.2%) and 76 males (41.8%). The majority are undergraduates (86.8%), with fields of study primarily in Medicine (60.4%), followed by Social Science (20.9%), Science (7.1%), Liberal Arts (6.6%), and Engineering (4.9%).

Figure 3 presents the prominent color chosen for each emotion: participants most often chose red for anger (73.11%), yellow for happiness (47.8%), blue for sadness (51.1%), and black for fear (40.7%). Table 3 shows significant differences in prominent color choice, color usage, color fill percentage, brightness, and saturation across the four emotions (chi-square = 664.882, p < 0.001), but no significant differences in the number of colors used or image coverage. In terms of average color usage percentages, red was the most commonly used color for anger (72.27%), yellow for happiness (49.9%), and blue for sadness (51.66%), while black was frequently used for fear (33.92%). Happiness had the highest saturation percentage (68.52%) and fear the lowest (47.33%). Likewise, happiness had the highest brightness percentage (75.44%) and fear the lowest (48.78%). Fear had the highest color fill percentage of the four emotions (35.49%), while happiness had the lowest (25.14%).
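A chi-square test of this kind compares observed counts in an emotion-by-category table (for example, prominent color by emotion) with the counts expected if the two variables were independent. The pure-Python sketch below computes the Pearson statistic and its degrees of freedom; the function name and the toy table are hypothetical and do not reproduce the study's data. A p-value can then be obtained from the chi-square distribution, for example with `scipy.stats.chi2.sf(statistic, dof)`.

```python
def pearson_chi_square(observed):
    """Pearson chi-square statistic and degrees of freedom for an r x c table of counts."""
    rows = [sum(row) for row in observed]
    cols = [sum(col) for col in zip(*observed)]
    n = sum(rows)
    if n == 0 or 0 in rows or 0 in cols:
        raise ValueError("every row and column needs a non-zero total")
    chi2 = 0.0
    for i, row in enumerate(observed):
        for j, obs in enumerate(row):
            expected = rows[i] * cols[j] / n  # count expected under independence
            chi2 += (obs - expected) ** 2 / expected
    dof = (len(rows) - 1) * (len(cols) - 1)
    return chi2, dof
```

For the toy table [[10, 20], [20, 10]], every expected count is 15, giving a statistic of 20/3 (about 6.67) with 1 degree of freedom.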

Table 4 classifies respondents' emotional depictions into two categories across the four emotions. Tangible (non-abstract) styles dominated at 71–84%, while abstract styles peaked for fear (28.57%) and were least common for happiness (16.48%). The proportions differed significantly across emotions for each tangible style (p < 0.05 to p < 0.001) except facial expressions (p = 0.47). Facial expressions and symbolic representations were the dominant tangible categories, ranging from 49.45% (anger) to 41.76% (fear) and from 47.8% (anger) to 24.18% (fear), respectively; anger led both categories. Fear stood out among the four emotions, with the highest proportions of body language (22.53%), narrative illustration (14.29%), and abstract expression (28.57%).

The overall percentage of prediction rates from multinomial logistic regression was 71.3% (Table 5). Among the emotions, anger had the highest prediction accuracy at 81.3%, followed by sadness at 71.4%, fear at 67.6%, and happiness at 64.8%. Our model exhibits a good fit, with a Pseudo R-Square (Nagelkerke) value of 0.749 and a Chi-Square value of 882.500 (p < 0.001).
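Both fit statistics reported above are functions of the log-likelihoods of the fitted model and of an intercept-only (null) model. The sketch below applies the standard formulas for the likelihood-ratio chi-square, Cox-Snell R², and Nagelkerke R² (Cox-Snell rescaled by its maximum attainable value); the function name and the example inputs are illustrative, not the study's values.

```python
import math


def model_fit(ll_null, ll_model, n):
    """Likelihood-ratio chi-square plus Cox-Snell and Nagelkerke pseudo R-squared,
    given the log-likelihoods of the null and fitted models and the sample size n."""
    lr_chi2 = 2.0 * (ll_model - ll_null)
    # Cox-Snell: 1 - (L_null / L_model)^(2/n), written in log-likelihood form
    cox_snell = 1.0 - math.exp(2.0 * (ll_null - ll_model) / n)
    # Largest value Cox-Snell can reach: 1 - L_null^(2/n)
    max_cox_snell = 1.0 - math.exp(2.0 * ll_null / n)
    nagelkerke = cox_snell / max_cox_snell
    return lr_chi2, cox_snell, nagelkerke
```

For example, with a null log-likelihood of -100, a model log-likelihood of -50, and n = 100, the likelihood-ratio chi-square is 100 and the Nagelkerke R² is about 0.731.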

Discussion

Our investigation examined non-verbal emotional depictions through participants' digital drawings. Our findings indicate that color choices in the drawings align closely with those reported in previous studies4,5,6,7,8,9,10,11,12: red was the dominant color for anger (73.1%), yellow for happiness (47.8%), blue for sadness (51.1%), and black for fear (40.7%). Fear had the most color fill (35.49%) and the smallest image coverage (84.63%). Non-abstract styles dominated at 71–84%, while abstract styles peaked for fear (28.57%) and dipped for happiness (16.48%). The model predicts emotions with 71.3% average accuracy, excelling in anger prediction at 81.3%.

Bridging valence-arousal and approach-avoidance theories

As elaborated, the theoretical constructs of valence and arousal offer a nuanced lens for dissecting the intricacies of emotional experience3,47. These constructs categorize emotions based on their hedonic tone and level of physiological arousal, thereby providing a foundational scaffold upon which our empirical findings can be mapped and interpreted. Our study reflects this model: red (73.1% in anger drawings) aligns with high arousal and negative valence, indicative of anger intensity. Yellow (47.8% in happiness drawings) varies in arousal but is positively valenced, signifying joy. Blue (51.1% in sadness drawings) shows low arousal and negative valence, depicting melancholy. Black (40.7% in fear drawings) suggests moderate arousal and negative valence, akin to fear's uncertainty and anxiety.

Our results also resonate with previous studies12,21 that linked saturated colors to higher arousal. In our study, happiness exhibited the highest saturation (68.52%), whereas fear showed lower saturation (47.33%), which paradoxically hinted at the heightened arousal and avoidance behavior typically associated with fear. Moreover, the high brightness of happiness drawings (75.44%) is consistent with positive valence. This observation is supported by Lin et al.48, who demonstrated that the perception of colored photos changes with valence and saturation. Thus, our study underscores the complex role of color attributes in emotional representation and affective judgment, providing practical insights into the connection between color use in drawings and emotional states as defined by arousal and valence.

Our study observed intriguing contrasts in color fill percentages across emotions. Fear drawings had the highest color fill (35.49%), possibly serving as a therapeutic outlet for emotional relief49. In contrast, happiness drawings had only 25.14%, possibly indicating a lesser need for expression. Interpreted through approach-avoidance theory, these findings reveal nuanced emotional narratives. Avoidance motivations, reflected in the extensive color fill of fear drawings, heighten alertness. In contrast, happiness, associated with approach motivations, appears more subdued, subtly inviting positive experiences, in line with theories in which color variations embody distinct emotional motivations.

Depiction styles: tangible vs. abstract

Our data exhibit a clear preference for tangible styles of emotional illustration. Extensive evidence supports the universality of facial expressions as indicators of emotional states50,51. Our study corroborated this: many participants opted for tangible representations, predominantly facial expressions and symbols. Anger, perhaps because of its intensity and explicit nature, had the highest proportions in both of these tangible categories. This echoes one study that emphasized the pivotal role of facial expressions in conveying emotion, noting that masks reduce recognition by 31%, except for anger51. Narrative illustrations were seldom used for any emotion, with fear the highest (14.29%), possibly because of their complexity and time demands: they require advanced artistic skills and intricate narrative structures. Fear's relatively higher use of narrative suggests its compatibility with storytelling, aligning with its suspenseful nature. Fear also had the largest proportion of abstract depictions (28.57% of fear drawings), a finding consistent with the vigilance-avoidance hypothesis52. This hypothesis offers a nuanced perspective on fear: it suggests that socially anxious individuals may initially be drawn to fear stimuli before eventually avoiding them, potentially internalizing the emotion, which may explain fear's prevalence in abstract expressions. Furthermore, a recent study53 links uncertainty to negative emotions such as fear and anxiety, implying that the abstract nature of fear in our drawings conveys emotional complexity and uncertainty. This again aligns with the view that fear often manifests internally and is less overt, emphasizing its intricate relationship with uncertainty54.

Communication style

As social media continues to reshape our digital landscape, the articulation of emotions mirrors profound cultural transformations. In the tangible categories of emotional portrayal, facial expressions ranged from 49.45% (anger) to 41.76% (fear), as shown in Table 4. Our sample, predominantly college students, aligns with current digital communication trends. The Adobe 2022 U.S. Emoji Trend Report emphasizes the extensive use of emojis, particularly among Gen Z and Millennials30. Facial or emoji stimuli are processed more rapidly than words, highlighting the efficiency of visual symbols in emotional communication31. Another recent study indicates that emojis elicit high arousal and that discrete emotions are most recognizable in emojis, followed by human faces and emoticons55. An overwhelming 91% of respondents use emojis to lighten conversations, and 69% prefer expressing emotions through emojis over text-only conversations30. Notably, since the COVID-19 pandemic, more people have become accustomed to virtual workplaces, with collaborators increasingly using non-textual responses to cultivate interpersonal bonds in informal settings32. This shift may have influenced how respondents visually expressed emotions, mirroring the emojis or stickers they frequently use. However, significant limitations must be acknowledged. Expressing emotion through simplified, emoji-like facial representations might restrain creativity and diminish the complexity of emotional communication. While such representations enable quick communication, they may lack depth and fail to serve more nuanced forms of emotional expression. Moreover, ubiquitous representations may homogenize emotional expression, compromising individual uniqueness due to their inherent socialization.

Prediction

In our study, we implemented multinomial logistic regression to classify emotions, achieving an average prediction accuracy of 71.3% across all emotions, with a notable 81.3% accuracy in predicting anger. Our model uniquely incorporated various factors, including saturation, brightness, image coverage, color fill, and depiction style, distinguishing it from traditional studies focusing mainly on color-emotion associations. Comparatively, one study assessed emotions through writing and drawing, utilizing Random Forest classifiers to report a 71.6% accuracy in detecting depression from drawings34. Similarly, Nolazco-Flores et al. used handwriting and drawing analyses from the Emothaw database, employing a radial basis SVM model to achieve varying accuracies for depression, anxiety, and stress16. Another study35 investigated stroke count variations in handwriting and drawing tasks among different depression severity groups. Distinctively, our study's drawings were participant-generated, allowing a broader spectrum of emotional expression through free drawing as opposed to the specific objects like trees or houses often used in other studies. This approach resulted in a richer variety of indicators for emotion prediction. Future research can enhance emotion prediction techniques by building on our methodology of using diverse, participant-generated drawings for more comprehensive nonverbal analyses.

Implications

First, our drawing-based approach, akin to art psychotherapy, offers an empathetic way to explore emotions that avoids the invasiveness of traditional questionnaires. It can create a relaxed environment and sidestep the discomfort of direct questioning. These insights suggest that drawing could serve as an alternative method for detecting and engaging with participants' mental challenges and thought processes, with potential applications in both practice and education. Second, by using Python and OpenCV, we transformed qualitative images into quantitative data, enabling a more detailed analysis of emotional representations. Our model predicted anger with 81.3% accuracy, well above the 71.3% average across all four emotions, possibly because anger was depicted with more consistent visual features, such as the dominant use of red. Third, our study breaks new ground in color-emotion research by using images created by participants rather than predefined color-emotion pairings. This method treats respondents as active contributors, enriching our understanding of how people interpret and express emotions through imagery.
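The conversion of drawings into saturation, brightness, and color-fill values can be sketched as follows. In OpenCV this would typically start with `cv2.imread` (which returns channels in BGR order) and `cv2.cvtColor(img, cv2.COLOR_BGR2HSV)`, where 8-bit images store H in 0–179 and S and V in 0–255. To keep the example dependency-free, the sketch below applies Python's standard `colorsys.rgb_to_hsv` to a list of RGB pixels instead. The background rule (near-white pixels count as empty canvas), its thresholds, the choice to average saturation and brightness over drawn pixels only, and the function names are hypothetical assumptions, not the study's documented definitions.

```python
import colorsys


def is_background(s, v, v_min=0.95, s_max=0.10):
    """Hypothetical rule: treat bright, nearly unsaturated (near-white) pixels as canvas."""
    return v >= v_min and s <= s_max


def color_metrics(pixels):
    """Return (mean saturation %, mean brightness %, color fill %) for RGB pixels.

    pixels: list of (r, g, b) tuples with channels in 0-255.
    Saturation and brightness (HSV value) are averaged over drawn pixels only;
    color fill is the share of all pixels that are not background.
    """
    drawn = []
    for r, g, b in pixels:
        # colorsys expects channels in 0-1 and returns h, s, v in 0-1.
        _, s, v = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
        if not is_background(s, v):
            drawn.append((s, v))
    if not drawn:
        return 0.0, 0.0, 0.0  # blank canvas: nothing drawn
    mean_s = 100 * sum(s for s, _ in drawn) / len(drawn)
    mean_v = 100 * sum(v for _, v in drawn) / len(drawn)
    fill = 100 * len(drawn) / len(pixels)
    return mean_s, mean_v, fill
```

For example, `color_metrics([(255, 0, 0), (255, 255, 255)])` treats the white pixel as canvas and returns `(100.0, 100.0, 50.0)`: the single red pixel is fully saturated and fully bright, and it covers half the image. Black or gray strokes count toward color fill under this rule, because their low brightness keeps them from being classified as background.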

Research limitations and future directions

First, the homogeneity of our participants, all university students, limits the scope and generalizability of our findings. However, this homogeneity is also a strength: it allows a focused exploration of this demographic's emotional and color perceptions and a detailed analysis of patterns such as the gender-specific use of yellow in expressing sadness, offering insight into the emotional nuances of young adults in academia. Second, the diverse and intricate drawing styles, especially free-style representations, posed challenges for automated analysis, so we relied more heavily on human judgment. Future computational work might consider hybrid models that combine machine learning with heuristic or semantic analyses; other strategies worth exploring include feedback loops, deeper integration of advanced learning algorithms, and a larger training dataset. Third, most participants preferred tangible representations for expressing emotions. Factors such as drawing habits, recent emotional states, artistic backgrounds, or personality traits may have shaped these choices, and future research should examine them.

Conclusions

In conclusion, our study advances image-emotion analysis by using Python and OpenCV to convert subjective human perceptions into measurable data. Drawing proved to be a useful non-invasive method for studying emotion, and it shows promise for practical applications in psychological assessment and education.

Data availability

These data have been coded and permanently de-identified. The datasets generated and/or analyzed during the current study are available in the OSF repository at https://osf.io/4wdgv/?view_only=99ebdb13e1d54b94be3094d64add136d.

References

Lange, J., Heerdink, M. W. & van Kleef, G. A. Reading emotions, reading people: emotion perception and inferences drawn from perceived emotions. Curr. Opin. Psychol. 43, 85–90. https://doi.org/10.1016/j.copsyc.2021.06.008 (2022).

Shimamura, A. P. & Palmer, S. E. Aesthetic science: Connecting minds, brains, and experience 3–28 (Oxford University Press Inc, Oxford, 2012).

Posner, J., Russell, J. A. & Peterson, B. S. The circumplex model of affect: an integrative approach to affective neuroscience, cognitive development, and psychopathology. Dev. Psychopathol. 17, 715–734. https://doi.org/10.1017/S0954579405050340 (2005).

Damiano, C. et al. Anger is red, sadness is blue: Emotion depictions in abstract visual art by artists and non-artists. J. Vis. 23, 1–1. https://doi.org/10.1167/JOV.23.4.1 (2023).

Fugate, J. M. B. & Franco, C. L. What color is your anger? Assessing color-emotion pairings in English speakers. Front. Psychol. 10, 206. https://doi.org/10.3389/fpsyg.2019.00206 (2019).

Jonauskaite, D. et al. Universal patterns in color-emotion associations are further shaped by linguistic and geographic proximity. Psychol. Sci. 31, 1245–1260. https://doi.org/10.1177/0956797620948810 (2020).

Jonauskaite, D. et al. A comparative analysis of colour–emotion associations in 16–88-year-old adults from 31 countries. Br. J. Psychol. https://doi.org/10.1111/BJOP.12687 (2023).

Jonauskaite, D. et al. A machine learning approach to quantify the specificity of colour–emotion associations and their cultural differences. R. Soc. Open Sci. https://doi.org/10.1098/RSOS.190741 (2019).

Kaya, N. & Epps, H. H. Relationship between color and emotion: A study of college students. Coll. Student J. 38, 396–405 (2004).

Liao, S., Sakata, K. & Paramei, G. V. Color affects recognition of emoticon expressions. i-Perception https://doi.org/10.1177/20416695221080778 (2022).

Sutton, T. M. & Altarriba, J. Finding the positive in all of the negative: Facilitation for color-related emotion words in a negative priming paradigm. Acta Psychol. 170, 84–93. https://doi.org/10.1016/j.actpsy.2016.06.012 (2016).

Valdez, P. & Mehrabian, A. Effects of color on emotions. J. Exp. Psychol. Gen. 123, 394–409. https://doi.org/10.1037/0096-3445.123.4.394 (1994).

Guillemin, M. & Drew, S. Questions of process in participant-generated visual methodologies. Vis. Stud. 25, 175–188 (2010).

Schachter, S. & Singer, J. Cognitive, social, and physiological determinants of emotional state. Psychol. Rev. 69, 379–399. https://doi.org/10.1037/H0046234 (1962).

Thorstenson, C. A., Pazda, A. D., Young, S. G. & Elliot, A. J. Face color facilitates the disambiguation of confusing emotion expressions: toward a social functional account of face color in emotion communication. Emotion 19, 799 (2019).

Nolazco-Flores, J. A., Faundez-Zanuy, M., Velazquez-Flores, O. A., Cordasco, G. & Esposito, A. Emotional state recognition performance improvement on a handwriting and drawing task. IEEE Access 9, 28496–28504. https://doi.org/10.1109/ACCESS.2021.3058443 (2021).

Brailas, A. Using drawings in qualitative interviews: An introduction to the practice. The Qual. Rep. 25, 4447–4460. https://doi.org/10.46743/2160-3715/2020.4585 (2020).

Prange, A., Barz, M. & Sonntag, D. A categorisation and implementation of digital pen features for behaviour characterisation. https://arxiv.org/abs/1810.03970 (2018).

Pénzes, I., van Hooren, S., Dokter, D. & Hutschemaekers, G. How art therapists observe mental health using formal elements in art products: structure and variation as indicators for balance and adaptability. Front. Psychol. 9, 391356 (2018).

Pénzes, I., van Hooren, S., Dokter, D. & Hutschemaekers, G. Formal elements of art products indicate aspects of mental health. Front. Psychol. 11, 572700. https://doi.org/10.3389/FPSYG.2020.572700 (2020).

Wilms, L. & Oberfeld, D. Color and emotion: effects of hue, saturation, and brightness. Psychol. Res. 82, 896–914. https://doi.org/10.1007/S00426-017-0880-8 (2018).

Jonauskaite, D., Althaus, B., Dael, N., Dan-Glauser, E. & Mohr, C. What color do you feel? Color choices are driven by mood. Color Res. Appl. 44, 272–284. https://doi.org/10.1002/COL.22327 (2019).

Reynolds, L., Broadbent, E., Ellis, C. J., Gamble, G. & Petrie, K. J. Patients' drawings illustrate psychological and functional status in heart failure. J. Psychosom. Res. 63, 525–532. https://doi.org/10.1016/J.JPSYCHORES.2007.03.007 (2007).

Talu, E. Reflections of fears of children to drawings. Eur. J. Edu. Res. 8, 763–779. https://doi.org/10.12973/EU-JER.8.3.763 (2019).

Liu, H. & Da, S. The relationships between leisure and happiness-A graphic elicitation method. Leisure Stud. 39, 111–130. https://doi.org/10.1080/02614367.2019.1575459 (2020).

Ekman, P. & Davidson, R. J. The nature of emotion: Fundamental questions (Oxford University Press, Oxford, 1994).

Tomkins, S. Affect imagery consciousness. The positive affects (Springer, Berlin, 1962).

Fischer, K. W., Shaver, P. R. & Carnochan, P. How emotions develop and how they organise development. Cognit. Emotion 4, 81–127. https://doi.org/10.1080/02699939008407142 (1990).

Lazarus, R. S. Emotion and adaptation (Oxford University Press, Oxford, 1991).

Adobe. Adobe's Future of Creativity: 2022 U.S. Emoji Trend Report. https://blog.adobe.com/en/publish/2022/09/13/emoji-trend-report-2022 (2022).

Kaye, L. K. et al. How emotional are emoji?: Exploring the effect of emotional valence on the processing of emoji stimuli. Comput. Human Behav. 116, 106648. https://doi.org/10.1016/j.chb.2020.106648 (2021).

Shandilya, E., Fan, M. & Tigwell, G. W. 'I need to be professional until my new team uses emoji, GIFs, or memes first': New collaborators' perspectives on using non-textual communication in virtual workspaces. In Conference on Human Factors in Computing Systems: Proceedings. https://doi.org/10.1145/3491102.3517514 (2022).

Jonauskaite, D. et al. Most and least preferred colours differ according to object context: new insights from an unrestricted colour range. PLOS ONE 11, e0152194. https://doi.org/10.1371/JOURNAL.PONE.0152194 (2016).

Likforman-Sulem, L., Esposito, A., Faundez-Zanuy, M., Clemencon, S. & Cordasco, G. EMOTHAW: A novel database for emotional state recognition from handwriting and drawing. IEEE Trans. Human-Machine Syst. 47, 273–284. https://doi.org/10.1109/THMS.2016.2635441 (2017).

Raimo, G. et al. Handwriting and drawing for depression detection: a preliminary study. In Applied intelligence and informatics (eds Mahmud, M. et al.) 320–332 (Springer, Berlin, 2022).

Python Software Foundation. The Python Language Reference v. 3.10 https://docs.python.org/3.10/reference/index.html (2021).

OpenCV. OpenCV-Python Tutorials v. 4.8.0.74 https://docs.opencv.org/4.8.0/d6/d00/tutorial_py_root.html (2021).

Albers, A. M., Gegenfurtner, K. R. & Nascimento, S. M. C. An independent contribution of colour to the aesthetic preference for paintings. Vision Res. 177, 109–117. https://doi.org/10.1016/J.VISRES.2020.08.005 (2020).

Nascimento, S. M. C. et al. The colors of paintings and viewers' preferences. Vision Res. 130, 76–84. https://doi.org/10.1016/J.VISRES.2016.11.006 (2017).

Inoue, K., Jiang, M. & Hara, K. Hue-preserving saturation improvement in RGB color cube. J. Imaging 7, 150. https://doi.org/10.3390/JIMAGING7080150 (2021).

Zhou, D., He, G., Xu, K. & Liu, C. A two-stage hue-preserving and saturation improvement color image enhancement algorithm without gamut problem. IET Image Proc. 17, 24–31. https://doi.org/10.1049/IPR2.12613 (2023).

Juhee, K. & Hyeon-Jeong, S. Prediction of the emotion responses to poster designs based on graphical features: A machine learning-driven approach. Arch. Des. Res. 33, 39–55. https://doi.org/10.15187/adr.2020.05.33.2.39 (2020).

IBM. SPSS Statistics 27.0.0 v. 27 https://www.ibm.com/docs/en/spss-statistics/27.0.0 (2021).

IBM. SPSS Statistics Multinomial Logistic Regression. https://www.ibm.com/docs/en/spss-statistics/29.0.0?topic=regression-multinomial-logistic (2024).

MacKinnon, J. G. Bootstrap inference in econometrics. Can. J. Econ./Revue canadienne d'économique 35, 615–645 (2002).

Vrigazova, B. P. & Ivanov, I. G. The bootstrap procedure in classification problems. Int. J. Data Mining Modell. Manag. 12, 428–446. https://doi.org/10.1504/IJDMMM.2020.111400 (2020).

Russell, J. A. A circumplex model of affect. J. Personal. Soc. Psychol. 39, 1161–1178. https://doi.org/10.1037/h0077714 (1980).

Lin, C., Mottaghi, S. & Shams, L. The effects of color and saturation on the enjoyment of real-life images. Psychon. Bull. Rev. 1, 1–12. https://doi.org/10.3758/S13423-023-02357-4 (2023).

Elliot, A. J. & Maier, M. A. Color and psychological functioning. Curr. Dir. Psychol. Sci. 16, 250–254. https://doi.org/10.1111/J.1467-8721.2007.00514.X (2007).

Ekman, P. & Friesen, W. V. Constants across cultures in the face and emotion. J. Personal. Soc. Psychol. 17, 124–129. https://doi.org/10.1037/H0030377 (1971).

Proverbio, A. M. & Cerri, A. The recognition of facial expressions under surgical masks: The primacy of anger. Front. Neurosci. 16, 864490. https://doi.org/10.3389/FNINS.2022.864490 (2022).

Reichenberger, J., Pfaller, M. & Mühlberger, A. Gaze behavior in social fear conditioning: An eye-tracking study in virtual reality. Front. Psychol. 11, 504052. https://doi.org/10.3389/FPSYG.2020.00035 (2020).

Morriss, J., Tupitsa, E., Dodd, H. F. & Hirsch, C. R. Uncertainty makes me emotional: Uncertainty as an elicitor and modulator of emotional states. Front. Psychol. 13, 777025. https://doi.org/10.3389/FPSYG.2022.777025 (2022).

Dael, N., Mortillaro, M. & Scherer, K. R. Emotion expression in body action and posture. Emotion 12, 1085–1101. https://doi.org/10.1037/A0025737 (2012).

Fischer, B. & Herbert, C. Emoji as affective symbols: Affective judgments of emoji, emoticons, and human faces varying in emotional content. Front. Psychol. 12, 645173. https://doi.org/10.3389/FPSYG.2021.645173 (2021).

Funding

This research was supported in part by the Higher Education Sprout Project, Ministry of Education to the Headquarters of University Advancement at National Cheng Kung University (NCKU).

Author information

Contributions

H.C.W: Conceptualization, Methodology, Data curation, Writing-Original draft preparation, Visualization, Validation, Writing-Reviewing and Editing, Project Administration. L.Y.H: Methodology, Data curation, Validation, Formal analysis, Writing-Reviewing and Editing. L.I.: Methodology, Data curation, Software, Validation, Formal analysis. P.C.H.: Conceptualization, Methodology, Writing-Original draft preparation, Writing-Reviewing. C.T.Y.: Conceptualization, Validation, Writing-Reviewing and Editing, Project Administration. C.Y.L.: Conceptualization, Methodology, Reviewing and Editing. P.H.L.: Methodology, Software, Validation, Formal analysis.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Weng, HC., Huang, LY., Imcha, L. et al. Drawing as a window to emotion with insights from tech-transformed participant images. Sci Rep 14, 11571 (2024). https://doi.org/10.1038/s41598-024-60532-6

DOI: https://doi.org/10.1038/s41598-024-60532-6