Abstract

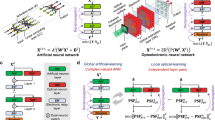

Artificial intelligence now advances by performing twice as many floating-point multiplications every two months, but the semiconductor industry tiles twice as many multipliers on a chip only every two years. Moreover, the returns from tiling these multipliers ever more densely now diminish because signals must travel relatively farther and farther. Although travel can be shortened by stacking tiled multipliers in a three-dimensional chip, such a solution acutely reduces the available surface area for dissipating heat. Here I propose to transcend this three-dimensional thermal constraint by moving away from learning with synapses to learning with dendrites. In this approach, termed dendrocentric learning, synaptic inputs are not weighted precisely but rather ordered meticulously along a short stretch of dendrite. With the help of a computational model of a dendrite and a conceptual model of a ferroelectric device that emulates it, I illustrate how artificial intelligence based on dendrocentric learning (synthetic intelligence for short) could run not with megawatts in the cloud but rather with watts on a smartphone.
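
The gap between the two doubling rates quoted in the abstract can be made concrete with a little arithmetic. The sketch below assumes both trends are clean exponentials with the stated doubling periods, which is an idealization. The function name `growth_factor` and the 24-month horizon are illustrative choices, not taken from the article.

```python
def growth_factor(months: float, doubling_period_months: float) -> float:
    """Multiplicative growth after `months`, given a fixed doubling period."""
    return 2.0 ** (months / doubling_period_months)


# Illustrative horizon (an assumption): one hardware doubling period.
HORIZON_MONTHS = 24

# Multiplications performed double every two months (abstract).
demand = growth_factor(HORIZON_MONTHS, 2)

# Multipliers tiled on a chip double every two years (abstract).
supply = growth_factor(HORIZON_MONTHS, 24)

# How much faster the workload grows than the hardware over the horizon.
gap = demand / supply

print(f"demand x{demand:.0f}, supply x{supply:.0f}, gap x{gap:.0f}")
```

Under these assumptions, the workload grows by twelve doublings in the time the hardware manages one, so the shortfall itself doubles eleven times over two years.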

Code availability

The Mathematica notebook used to simulate and analyse the dendrite model is available at https://web.stanford.edu/group/brainsinsilicon/documents/Spiny_Dendrite_Model.nb.

Acknowledgements

This work was supported by the US Office of Naval Research (grant numbers N000141310419 and N000141512827), the US National Science Foundation (grant number 2223827), Stanford Medical Center Development (Discovery Innovation Fund), the Stanford Institute for Human-Centered Artificial Intelligence (HAI), C. Reynolds and GrAI Matter Labs. I thank P. Sterling for his help in editing the manuscript.

Author information

Contributions

K.B. conceived, performed and wrote up this work.

Ethics declarations

Competing interests

K.B. is a co-founder and stockholder of Femtosense Inc. and an advisor to Radical Semiconductor.

Peer review

Peer review information

Nature thanks Dilip Vasudevan and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary information

Supplementary Information

This file contains 3 sections: Energy per inference for GPT-3 on Pixel-4; Wiring analysis; and Dendrite model.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Boahen, K. Dendrocentric learning for synthetic intelligence. Nature 612, 43–50 (2022). https://doi.org/10.1038/s41586-022-05340-6

DOI: https://doi.org/10.1038/s41586-022-05340-6

This article is cited by

- Temporal dendritic heterogeneity incorporated with spiking neural networks for learning multi-timescale dynamics. Nature Communications (2024)

- DenRAM: neuromorphic dendritic architecture with RRAM for efficient temporal processing with delays. Nature Communications (2024)

- Organic mixed conductors for bioinspired electronics. Nature Reviews Materials (2023)

- The physics of optical computing. Nature Reviews Physics (2023)

- Catalyzing next-generation Artificial Intelligence through NeuroAI. Nature Communications (2023)
