Abstract

Expectations have a powerful influence on how we experience the world. Neurobiological and computational models of learning suggest that dopamine is crucial for shaping expectations of reward and that expectations alone may influence dopamine levels. However, because expectations and reinforcers are typically manipulated together, the role of expectations per se has remained unclear. We separated these two factors using a placebo dopaminergic manipulation in individuals with Parkinson's disease. We combined a reward learning task with functional magnetic resonance imaging to test how expectations of dopamine release modulate learning-related activity in the brain. We found that the mere expectation of dopamine release enhanced reward learning and modulated learning-related signals in the striatum and the ventromedial prefrontal cortex. These effects were selective to learning from reward: neither medication nor placebo had an effect on learning to avoid monetary loss. These findings suggest a neurobiological mechanism by which expectations shape learning and affect.

This is a preview of subscription content, access via your institution

Access options

Subscribe to this journal

Receive 12 print issues and online access

$209.00 per year

only $17.42 per issue

Buy this article

- Purchase on Springer Link

- Instant access to full article PDF

Prices may be subject to local taxes which are calculated during checkout

Similar content being viewed by others

References

Goetz, C.G. et al. Placebo influences on dyskinesia in Parkinson's disease. Mov. Disord. 23, 700–707 (2008).

Goetz, C.G. et al. Placebo response in Parkinson's disease: comparisons among 11 trials covering medical and surgical interventions. Mov. Disord. 23, 690–699 (2008).

McRae, C. et al. Effects of perceived treatment on quality of life and medical outcomes in a double-blind placebo surgery trial. Arch. Gen. Psychiatry 61, 412–420 (2004).

Benedetti, F. et al. Placebo-responsive Parkinson patients show decreased activity in single neurons of subthalamic nucleus. Nat. Neurosci. 7, 587–588 (2004).

de la Fuente-Fernández, R. et al. Expectation and dopamine release: mechanism of the placebo effect in Parkinson's disease. Science 293, 1164–1166 (2001).

Lidstone, S.C. et al. Effects of expectation on placebo-induced dopamine release in Parkinson disease. Arch. Gen. Psychiatry 67, 857–865 (2010).

Voon, V. et al. Mechanisms underlying dopamine-mediated reward bias in compulsive behaviors. Neuron 65, 135–142 (2010).

de la Fuente-Fernández, R. et al. Dopamine release in human ventral striatum and expectation of reward. Behav. Brain Res. 136, 359–363 (2002).

Schweinhardt, P., Seminowicz, D.A., Jaeger, E., Duncan, G.H. & Bushnell, M.C. The anatomy of the mesolimbic reward system: a link between personality and the placebo analgesic response. J. Neurosci. 29, 4882–4887 (2009).

Zubieta, J.K. & Stohler, C.S. Neurobiological mechanisms of placebo responses. Ann. NY Acad. Sci. 1156, 198–210 (2009).

Pessiglione, M., Seymour, B., Flandin, G., Dolan, R.J. & Frith, C.D. Dopamine-dependent prediction errors underpin reward-seeking behavior in humans. Nature 442, 1042–1045 (2006).

Sutton, R.S. & Barto, A.G. Reinforcement Learning: an Introduction (Bradford Book, 1998).

Barto, A.G. Reinforcement learning and dynamic programming. in Analysis, Design and Evaluation of Man-Machine Systems, Vol. 1,2 (ed. Johannsen, G.) 407–412 (1995).

Daw, N.D. in Trial-by-trial data analysis using computational models. Decision Making, Affect, Learning: Attention and Performance XXIII, Vol. 1 (eds. Delgado, M.R., Phelps, E.A. & Robbins, T.W.) 3–38 (Oxford University Press, 2011).

Daw, N.D., O'Doherty, J.P., Dayan, P., Seymour, B. & Dolan, R.J. Cortical substrates for exploratory decisions in humans. Nature 441, 876–879 (2006).

Hare, T.A., O'Doherty, J., Camerer, C.F., Schultz, W. & Rangel, A. Dissociating the role of the orbitofrontal cortex and the striatum in the computation of goal values and prediction errors. J. Neurosci. 28, 5623–5630 (2008).

Levy, D.J. & Glimcher, P.W. The root of all value: a neural common currency for choice. Curr. Opin. Neurobiol. 22, 1027–1038 (2012).

Plassmann, H., O'Doherty, J.P. & Rangel, A. Appetitive and aversive goal values are encoded in the medial orbitofrontal cortex at the time of decision making. J. Neurosci. 30, 10799–10808 (2010).

McClure, S.M., Berns, G.S. & Montague, P.R. Temporal prediction errors in a passive learning task activate human striatum. Neuron 38, 339–346 (2003).

O'Doherty, J.P., Dayan, P., Friston, K., Critchley, H. & Dolan, R.J. Temporal difference models and reward-related learning in the human brain. Neuron 38, 329–337 (2003).

Daw, N.D. & Doya, K. The computational neurobiology of learning and reward. Curr. Opin. Neurobiol. 16, 199–204 (2006).

Rushworth, M.F., Mars, R.B. & Summerfield, C. General mechanisms for decision making. Curr. Opin. Neurobiol. 19, 75–83 (2009).

Chowdhury, R. et al. Dopamine restores reward prediction errors in old age. Nat. Neurosci. 16, 648–653 (2013).

Schönberg, T., Daw, N.D., Joel, D. & O'Doherty, J.P. Reinforcement learning signals in the human striatum distinguish learners from nonlearners during reward-based decision making. J. Neurosci. 27, 12860–12867 (2007).

Schönberg, T. et al. Selective impairment of prediction error signaling in human dorsolateral but not ventral striatum in Parkinson's disease patients: evidence from a model-based fMRI study. Neuroimage 49, 772–781 (2010).

Behrens, T.E., Hunt, L.T., Woolrich, M.W. & Rushworth, M.F. Associative learning of social value. Nature 456, 245–249 (2008).

Li, J., Delgado, M.R. & Phelps, E.A. How instructed knowledge modulates the neural systems of reward learning. Proc. Natl. Acad. Sci. USA 108, 55–60 (2011).

Niv, Y., Edlund, J.A., Dayan, P. & O'Doherty, J.P. Neural prediction errors reveal a risk-sensitive reinforcement-learning process in the human brain. J. Neurosci. 32, 551–562 (2012).

Kirsch, I. Response expectancy as a determinant of experience and behavior. Am. Psychol. 40, 1189–1202 (1985).

Wager, T.D. et al. Placebo-induced changes in FMRI in the anticipation and experience of pain. Science 303, 1162–1167 (2004).

Pessiglione, M. et al. Subliminal instrumental conditioning demonstrated in the human brain. Neuron 59, 561–567 (2008).

Schmidt, L., Palminteri, S., Lafargue, G. & Pessiglione, M. Splitting motivation: unilateral effects of subliminal incentives. Psychol. Sci. 21, 977–983 (2010).

Benedetti, F. How the doctor's words affect the patient's brain. Eval. Health Prof. 25, 369–386 (2002).

Fournier, J.C. et al. Antidepressant drug effects and depression severity: a patient-level meta-analysis. J. Am. Med. Assoc. 303, 47–53 (2010).

Kirsch, I. & Sapirstein, G. Listening to Prozac but hearing placebo: a meta-analysis of antidepressant medication. Prev. Treat. 1, 2a (1998).

Bódi, N. et al. Reward-learning and the novelty-seeking personality: a between- and within-subjects study of the effects of dopamine agonists on young Parkinson's patients. Brain 132, 2385–2395 (2009).

Frank, M.J., Seeberger, L.C. & O'Reilly, R.C. By carrot or by stick: cognitive reinforcement learning in parkinsonism. Science 306, 1940–1943 (2004).

Palminteri, S. et al. Pharmacological modulation of subliminal learning in Parkinson's and Tourette's syndromes. Proc. Natl. Acad. Sci. USA 106, 19179–19184 (2009).

Maia, T.V. Reinforcement learning, conditioning, and the brain: successes and challenges. Cogn. Affect. Behav. Neurosci. 9, 343–364 (2009).

Gold, J.M. et al. Negative symptoms and the failure to represent the expected reward value of actions: behavioral and computational modeling evidence. Arch. Gen. Psychiatry 69, 129–138 (2012).

Bamford, N.S. et al. Heterosynaptic dopamine neurotransmission selects sets of corticostriatal terminals. Neuron 42, 653–663 (2004).

Wang, W. et al. Regulation of prefrontal excitatory neurotransmission by dopamine in the nucleus accumbens core. J. Physiol. (Lond.) 590, 3743–3769 (2012).

Bamford, N.S. et al. Dopamine modulates release from corticostriatal terminals. J. Neurosci. 24, 9541–9552 (2004).

Colloca, L. et al. Learning potentiates neurophysiological and behavioral placebo analgesic responses. Pain 139, 306–314 (2008).

Voudouris, N.J., Peck, C.L. & Coleman, G. The role of conditioning and verbal expectancy in the placebo response. Pain 43, 121–128 (1990).

Kordower, J.H. et al. Disease duration and the integrity of the nigrostriatal system in disease. Brain 136, 2419–2431 (2013).

Cools, R., Barker, R.A., Sahakian, B.J. & Robbins, T.W. Enhanced or impaired cognitive function in Parkinson's disease as a function of dopaminergic medication and task demands. Cereb. Cortex 11, 1136–1143 (2001).

Frank, M.J., Seeberger, L.C. & O'Reilly, R.C. By carrot or by stick: cognitive reinforcement learning in parkinsonism. Science 306, 1940–1943 (2004).

Shohamy, D., Myers, C.E., Geghman, K.D., Sage, J. & Gluck, M.A. L-dopa impairs learning, but spares generalization, in Parkinson's disease. Neuropsychologia 44, 774–784 (2006).

Shohamy, D., Myers, C.E., Grossman, S., Sage, J. & Gluck, M.A. The role of dopamine in cognitive sequence learning: evidence from Parkinson's disease. Behav. Brain Res. 156, 191–199 (2005).

Benedetti, F. et al. Placebo-responsive Parkinson patients show decreased activity in single neurons of subthalamic nucleus. Nat. Neurosci. 7, 587–588 (2004).

Benedetti, F. et al. Electrophysiological properties of thalamic, subthalamic and nigral neurons during the anti-parkinsonian placebo response. J. Physiol. (Lond.) 587, 3869–3883 (2009).

Starkstein, S.E. et al. Reliability, validity, and clinical correlates of apathy in Parkinson's disease. J. Neuropsychiatry Clin. Neurosci. 4, 134–139 (1992).

Spielberger, C.D. State-Trait Anxiety Inventory: Bibliography, 2nd edn. (Consulting Psychologists Press, 1989).

Movement Disorder Society Task Force on Rating Scales for Parkinson's Disease. The Unified Parkinson's Disease Rating Scale (UPDRS): status and recommendations. Mov. Disord. 18, 738–750 (2003).

Sutton, R.S. & Barto, A.G. Reinforcement Learning: an Introduction (Bradford Book, 1998).

Lindquist, M.A., Spicer, J., Asllani, I. & Wager, T.D. Estimating and testing variance components in a multi-level GLM. Neuroimage 59, 490–501 (2012).

Mazaika, P., Hoeft, F., Glover, G.H. & Reiss, A.L. Methods and software for fMRI analysis of clinical subjects. Organ. Hum. Brain Mapp 475 (2009).

Hare, T.A., O'Doherty, J., Camerer, C.F., Schultz, W. & Rangel, A. Dissociating the role of the orbitofrontal cortex and the striatum in the computation of goal values and prediction errors. J. Neurosci. 28, 5623–5630 (2008).

Pessiglione, M., Seymour, B., Flandin, G., Dolan, R.J. & Frith, C.D. Dopamine-dependent prediction errors underpin reward-seeking behavior in humans. Nature 442, 1042–1045 (2006).

Behrens, T.E., Hunt, L.T., Woolrich, M.W. & Rushworth, M.F. Associative learning of social value. Nature 456, 245–249 (2008).

Niv, Y., Edlund, J.A., Dayan, P. & O'Doherty, J.P. Neural prediction errors reveal a risk-sensitive reinforcement-learning process in the human brain. J. Neurosci. 32, 551–562 (2012).

Li, J., Delgado, M.R. & Phelps, E.A. How instructed knowledge modulates the neural systems of reward learning. Proc. Natl. Acad. Sci. USA 108, 55–60 (2011).

Maldjian, J.A., Laurienti, P.J., Kraft, R.A. & Burdette, J.H. An automated method for neuroanatomic and cytoarchitectonic atlas–based interrogation of fMRI data sets. Neuroimage 19, 1233–1239 (2003).

Acknowledgements

We thank neurologists P. Greene, R. Alcalay, L. Coté and the nursing staff of the Center for Parkinson's Disease and Other Movement Disorders at Columbia University Presbyterian Hospital for help with patient recruitment and discussion of the findings, N. Johnston and B. Vail for help with data collection, M. Sharp, K. Duncan, D. Sulzer, J. Weber and B. Doll for insightful discussion, and M. Pessiglione and G.E. Wimmer for helpful comments on an earlier version of the manuscript. This study was supported by the Michael J. Fox Foundation and the US National Institutes of Health (R01MH076136).

Author information

Authors and Affiliations

Contributions

D.S. and T.D.W. planned the experiment. L.S., T.D.W. and D.S. developed the experimental design. L.S. and E.K.B. collected data. L.S. analyzed data. D.S. and T.D.W. supervised and assisted in data analysis. L.S., E.K.B., T.D.W. and D.S. wrote the manuscript.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing financial interests.

Integrated supplementary information

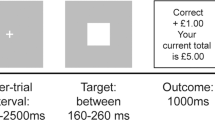

Supplementary Figure 1 Experimental and task design.

(a) Experimental design. Each patient was scanned 3 times: off drug, on placebo, and on drug. Off drug and placebo scan sessions were counterbalanced across patients (order 1: 8 patients; order 2: 10 patients). Scan sessions lasted for 1 h and were separated by a 1 h break. Placebo and dopaminergic medication were crushed into orange juice and administered 30 min before the respective scan session. (b) Task structure. The outcome of a trial could be a gain of $10, nothing ($0), or a loss of $10. Two cue pairs, a gain and a loss cue pair, were randomly intermixed. Within the gain cue pair, optimal choices led to a gain of $10 with a probability of.75 and to nothing ($0) with a probability of.25. Within the loss cue pair, optimal choices led to nothing ($0) with a probability of.75 and to a loss of $10 with a probability of.25.

Supplementary Figure 2 Partial correlations between on drug and placebo controlling for the effects of off drug (n = 18).

Partial correlations between on drug and placebo controlling for effects of off drug for reward learning (left) (r = 0.34, p = 0.08) and motor symptoms (right) (r = 0.59, p = 0.01). On drug and placebo expressed as residuals after confounds due to off drug effects were regressed out.

Supplementary Figure 3 BOLD responses to choices and feedback in the vmPFC and the ventral striatum (n = 15).

(a) Parameter estimates for choices (correct vs. incorrect) from the vmPFC region of interest in the gain and loss condition for off drug (gray), placebo (blue), and on drug (black) treatments. (b) Parameter estimates for feedback (correct vs. incorrect) from the ventral striatum region of interest in the gain and loss condition for the off drug (gray), placebo (blue), and on drug (black) treatments. Error bars represent within subject standard errors.

Supplementary Figure 4 Ventral striatum responses to components of the prediction error (n = 15).

Parameter estimates (betas) from the ventral striatum at time of outcome for the two components of prediction error – expected value and reward – in the off drug (gray), placebo (blue) and on drug (black) treatments. Error bars represent within subject standard errors.

Supplementary Figure 5 Reaction times during learning across conditions and treatment.

Average reaction time in seconds for the gain (left) and loss condition (right) in the off drug (gray), placebo (blue), and on drug (black) treatments. The reaction time curves depict how fast patients chose the optimal choice cue. Error bars represent within-subject standard errors.

Supplementary Figure 6 Behavioral results for the subgroup of patients scanned with fMRI (n = 15).

Percentage of observed optimal choices binned across bocks of 8 trials (left), smoothed (middle), and modeled optimal choices (right) in the gain (top) and loss (bottom) conditions for the off drug (gray), placebo (blue), and on drug (black) treatments. The learning curves depict how often patients chose the 75% rewarding cue (t11 = 5.2, p < 0.001) during the gain condition and the 75% nothing cue (t11 = 4.1, p < 0.01) during the loss condition. Error bars represent within-subject standard errors.

Supplementary Figure 7 Observed and modeled behavioral results for the scanned patients (n = 15).

Percentage of observed behavioral choices (dots) and modeled optimal choices (solid lines) across trials for off drug (gray), placebo (blue), and on drug (black) treatments. The learning curves depict how often patients chose the 75% rewarding cue (t11 = 5.2, p < 0.001) during the gain condition and the 75% loss cue (t11 = −4.1, p < 0.01) during the loss condition. The modeled learning curves represent the probabilities of choice on each trial, as predicted by the RL model.

Supplementary Figure 8 Direct comparisons of value and prediction error responses across treatments.

Statistical parametric maps (SPMs) are superimposed on the average structural scan. (a) SPMs are masked for the vmPFC ROI, defined a priori by MNI = [−1, 27, −8]) from Hare et al. 2008, at p < 0.05 uncorrected. (b) Whole brain activations for value at p < 0.05 uncorrected. (c) SPMs are masked for the ventral striatum ROI, defined a priori by MNI = [−10, 12, −8]) from Pessiglione et al. 2006, at p < 0.05 uncorrected. (d) Whole brain activations for prediction error at p < 0.05 uncorrected.

Supplementary information

Supplementary Text and Figures

Supplementary Figures 1–8 and Supplementary Tables 1–2 (PDF 795 kb)

Supplementary Methods Checklist

(PDF 530 kb)

Rights and permissions

About this article

Cite this article

Schmidt, L., Braun, E., Wager, T. et al. Mind matters: placebo enhances reward learning in Parkinson's disease. Nat Neurosci 17, 1793–1797 (2014). https://doi.org/10.1038/nn.3842

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1038/nn.3842

This article is cited by

-

Open-label placebo treatment does not enhance cognitive abilities in healthy volunteers

Scientific Reports (2023)

-

Forming global estimates of self-performance from local confidence

Nature Communications (2019)

-

Adjunct rasagiline to treat Parkinson’s disease with motor fluctuations: a randomized, double-blind study in China

Translational Neurodegeneration (2018)

-

Evidence for Cognitive Placebo and Nocebo Effects in Healthy Individuals

Scientific Reports (2018)

-

Placebo Intervention Enhances Reward Learning in Healthy Individuals

Scientific Reports (2017)